Power large-scale AI training and inference workloads using NVIDIA H200, B200, and A100 GPUs in a secure cloud environment.

Choose from a variety of GPU, CPU, RAM, and storage combinations, tailored to match your exact AI workload.

Don't wait for hours. Get your server ready in under 60 seconds with our automated provisioning engine.

Your data belongs to you. We provide isolated networks and hardware-level encryption for every instance.

Pay only for what you use with flexible hourly billing and no long-term commitments.

Access the latest NVIDIA GPU architectures optimized for machine learning, deep learning, and AI inference. We bridge the gap between development and production.

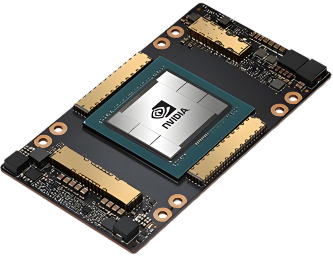

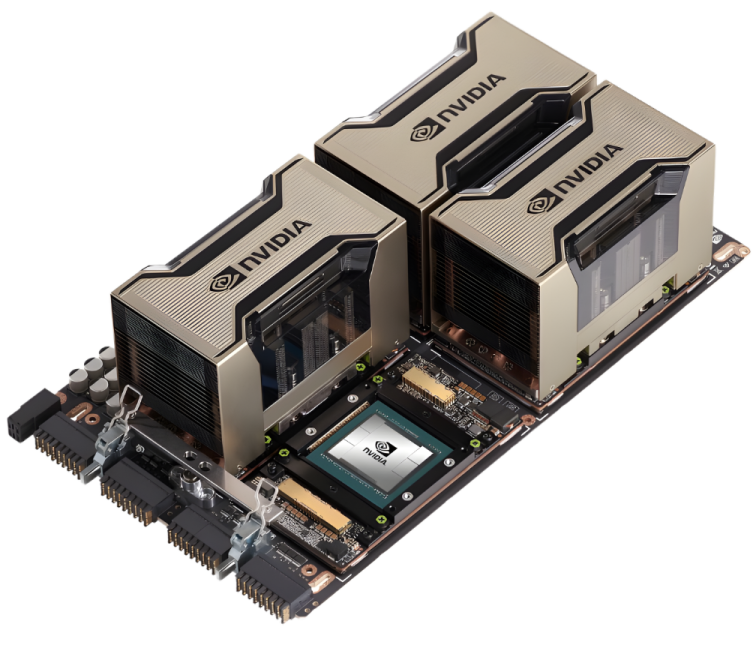

NVIDIA H200, B200 (Blackwell), and more: high-performance GPUs engineered for advanced AI training, inference, and large-scale workloads.

Deploy PyTorch, TensorFlow, CUDA, and other AI frameworks and tools with minimal setup.

Optimized memory architecture designed for AI training and data-intensive workloads.

Full control over your cloud GPU environment with flexible configurations designed for scale.
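A quick way to confirm that a newly deployed instance actually exposes its GPUs to your framework is to ask PyTorch directly. The sketch below is a minimal check, assuming PyTorch may or may not be installed on the instance. The function name `gpu_report` is hypothetical and not part of any provider tooling. It relies only on `torch.cuda.is_available()`, `torch.cuda.device_count()`, and `torch.cuda.get_device_properties()` to list each visible device with its compute capability and memory.

```python
import importlib.util


def gpu_report() -> dict:
    """Summarize which GPUs PyTorch can see (hypothetical helper, not provider tooling)."""
    # Avoid an ImportError on machines where PyTorch is not installed.
    if importlib.util.find_spec("torch") is None:
        return {"torch_installed": False, "cuda_available": False, "devices": []}

    import torch

    # False for CPU-only PyTorch builds or when the NVIDIA driver is unreachable.
    if not torch.cuda.is_available():
        return {"torch_installed": True, "cuda_available": False, "devices": []}

    devices = []
    for i in range(torch.cuda.device_count()):
        props = torch.cuda.get_device_properties(i)
        devices.append({
            "index": i,
            "name": props.name,
            # Compute capability, e.g. "8.0" for A100 or "9.0" for H100/H200.
            "compute_capability": f"{props.major}.{props.minor}",
            "memory_gib": round(props.total_memory / 2**30, 1),
        })
    return {"torch_installed": True, "cuda_available": True, "devices": devices}


if __name__ == "__main__":
    print(gpu_report())
```

On an instance with working drivers this should list one entry per GPU. An empty `devices` list with `torch_installed` set to `True` typically means PyTorch is a CPU-only build or cannot reach the NVIDIA driver.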

Supported Environments

No complex onboarding. Deploy, connect, and scale with a few clicks.

Select from H100, A100, or RTX instances based on your budget and workload needs.

Our automated system provisions your VM with pre-installed CUDA drivers in under 60 seconds.

Connect to your instance over secure SSH or through Jupyter Notebook, and start training your models immediately.

Pay only for the seconds you use. Scale up to clusters or terminate with one click.
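Per-second billing makes short experiments cheap to run. As a worked example, the sketch below converts an hourly rate into the charge for a given number of seconds. The rate used in the usage line is hypothetical, not a published price, and rounding to whole cents (half-up) is an assumption; actual invoices may round differently.

```python
from decimal import ROUND_HALF_UP, Decimal


def per_second_cost(hourly_rate: str, seconds: int) -> Decimal:
    """Charge for `seconds` of usage at `hourly_rate` (hypothetical pricing)."""
    if seconds < 0:
        raise ValueError("seconds must be non-negative")
    # Decimal avoids binary floating-point error in currency arithmetic.
    rate = Decimal(hourly_rate)
    cost = rate * seconds / Decimal(3600)
    # Assumed rounding rule: nearest cent, halves rounded up.
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    print(per_second_cost("3.60", 1500))  # 25-minute run
    print(per_second_cost("3.60", 3660))  # 61-minute run
```

At a hypothetical $3.60 per hour, a 25-minute (1,500-second) fine-tuning run comes to $3.60 × 1500 / 3600 = $1.50, and a 61-minute run comes to $3.66.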

Our infrastructure is purpose-built to handle the most demanding computational tasks.

Train massive neural networks and run complex ML experiments with ease.

Real-time object detection and video analytics at enterprise scale.

Fine-tune Llama, Mistral, and other large language models effectively.

Accelerate your creative workflow with high-performance GPU rendering.

Get instant GPU power with effortless setup, complete access, and reliable storage for all your workloads.

The rapid growth of AI and machine learning has fundamentally changed how infrastructure is designed and managed.

Gaming has come a long way, from bulky physical consoles and cartridge-based systems to powerful PCs and now fully cloud-powered experiences.

CUDA is often treated as portable by default. Developers write kernels, compile them, and assume they’ll run anywhere an NVIDIA GPU is present.
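The assumption breaks because compiled GPU code targets specific compute capabilities. According to NVIDIA's CUDA documentation, a cubin (SASS) built for `sm_XY` runs only on devices of compute capability `X.z` with `z >= Y`. PTX embedded for `compute_XY` can be JIT-compiled by the driver for any device of capability `X.Y` or higher. The sketch below encodes just those two rules. The helper names are hypothetical, and it deliberately ignores architecture-specific targets such as `sm_90a` (which do not carry forward) and driver-version limits on JIT compilation.

```python
from typing import Iterable, Tuple

# (major, minor) compute capability, e.g. (8, 0) for sm_80 / compute_80.
Arch = Tuple[int, int]


def cubin_runs_on(cubin: Arch, device: Arch) -> bool:
    # SASS is binary-compatible only within the same major version,
    # and only forward across minor revisions (8.0 -> 8.6, not 8.6 -> 8.0).
    return device[0] == cubin[0] and device[1] >= cubin[1]


def ptx_runs_on(ptx: Arch, device: Arch) -> bool:
    # PTX can be JIT-compiled for the same or any newer compute capability.
    # Tuples compare lexicographically: (9, 0) >= (8, 6) is True.
    return device >= ptx


def binary_runs_on(sass_targets: Iterable[Arch],
                   ptx_targets: Iterable[Arch],
                   device: Arch) -> bool:
    """True if any embedded SASS or PTX target can run on `device`."""
    return (any(cubin_runs_on(c, device) for c in sass_targets)
            or any(ptx_runs_on(p, device) for p in ptx_targets))


if __name__ == "__main__":
    # Built only with: -gencode arch=compute_80,code=sm_80
    print(binary_runs_on([(8, 0)], [], (8, 0)))  # A100
    print(binary_runs_on([(8, 0)], [], (9, 0)))  # H100 / H200
```

For example, a binary built only with `-gencode arch=compute_80,code=sm_80` runs on an A100 (compute capability 8.0) but not on an H100 or H200 (9.0). Also embedding PTX with `-gencode arch=compute_80,code=compute_80` lets the driver JIT-compile it for the newer GPU.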