Introduction

Modern GPUs are no longer used only for graphics. They are now essential for scientific computing, artificial intelligence (AI), deep learning, data analytics, and high-performance computing (HPC). NVIDIA GPUs dominate these fields, mainly because of two important processing units inside them: CUDA cores and Tensor cores. This blog provides a detailed technical comparison of CUDA cores and Tensor cores.

Importance of Understanding CUDA vs Tensor Cores

Choosing a GPU is not only about memory size or clock speed. The internal architecture matters. CUDA cores and Tensor cores serve different purposes, and knowing this helps in several ways:

1. Correct GPU selection

AI training needs strong Tensor core support, while scientific simulations rely more on CUDA cores.

2. Better workload optimization

Code can be written or tuned to use the most efficient hardware unit.

3. Performance improvement

Proper use of Tensor cores can cut training time substantially, in favorable cases from weeks to days.

4. Cost efficiency

Paying for Tensor cores makes sense only if the workload can use them.

5. Avoiding misconceptions

Tensor cores do not replace CUDA cores. They complement them.

Without this understanding, GPU capabilities are often underused or misused.

Brief History of CUDA Cores and Tensor Cores

Evolution of CUDA Cores

CUDA cores were introduced by NVIDIA in 2006 with the CUDA programming model. Before CUDA, GPUs were mostly fixed-function devices for graphics. CUDA transformed GPUs into general-purpose parallel processors.

Over time, CUDA cores evolved to support:

- Floating-point operations (FP32, FP64)

- Integer operations

- Logical and control operations

- Scientific and engineering workloads

Each GPU generation increased the number of CUDA cores and improved their efficiency.

Introduction of Tensor Cores

Tensor cores were introduced later, with the Volta architecture (2017). The main motivation was the rapid growth of deep learning. Neural networks rely heavily on matrix multiplications, which traditional CUDA cores can handle, but not efficiently enough.

Tensor cores were designed as specialized hardware units to accelerate matrix operations used in AI.

Since Volta, Tensor cores have evolved significantly in supported data types, precision modes, performance, and flexibility. They are now a core feature of modern NVIDIA GPUs.

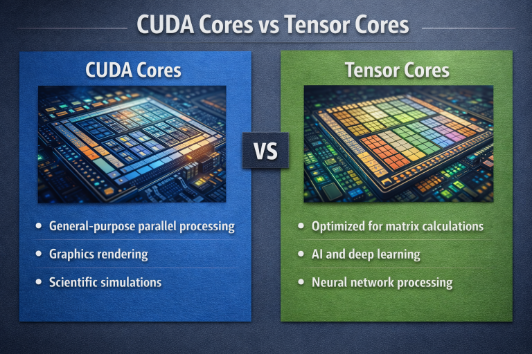

Definition and Role of CUDA Cores

CUDA cores are general-purpose parallel processing units inside NVIDIA GPUs. They are similar in concept to CPU cores but much simpler and more numerous.

Each CUDA core can perform floating-point arithmetic, integer arithmetic, logical operations, and control instructions. CUDA cores follow the SIMT (Single Instruction, Multiple Threads) model, in which groups of 32 threads (warps) execute the same instruction on different data. This allows thousands of threads to run in parallel.

Role of CUDA Cores

CUDA cores handle graphics rendering, physics simulations, scientific computing, financial modeling, signal processing, non-AI workloads, and the parts of AI workloads that are not matrix-heavy.

They are flexible and programmable for a wide range of tasks.

Definition and Role of Tensor Cores

Tensor cores are specialized accelerators designed specifically for matrix multiply-accumulate (MMA) operations.

A basic Tensor core operation looks like: D = A × B + C

This operation is central to deep learning and linear algebra.
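To make the operation concrete, here is a minimal pure-Python sketch of D = A × B + C on a tiny 2×2 tile. The function name `mma` is only illustrative, and real Tensor cores perform this on fixed-size tiles in hardware rather than with loops.

```python
def mma(a, b, c):
    """Return D = A x B + C for small matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    assert all(len(row) == inner for row in a), "A columns must match B rows"
    assert len(c) == rows and all(len(row) == cols for row in c), "C must have the shape of A x B"
    d = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = c[i][j]                    # start from the accumulator input C
            for k in range(inner):
                acc += a[i][k] * b[k][j]     # one multiply-accumulate step
            d[i][j] = acc
    return d


a = [[1.0, 2.0], [3.0, 4.0]]
b = [[5.0, 6.0], [7.0, 8.0]]
c = [[1.0, 1.0], [1.0, 1.0]]
d = mma(a, b, c)
print(d)  # [[20.0, 23.0], [44.0, 51.0]]
```

A layer's forward pass in a neural network is essentially many of these operations applied to tiles of the weight and activation matrices.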

Role of Tensor Cores

Tensor cores are optimized for Deep learning training, Deep learning inference, Large language models (LLMs), Transformer models, and Matrix-heavy AI workloads.

They provide extremely high throughput for these operations, far beyond what CUDA cores alone can achieve.

Core Architectural Differences

CUDA Core Architecture

- Scalar and vector arithmetic

- Flexible instruction execution

- Works on individual data elements

- Optimized for control flow and diverse workloads

- Lower throughput for large matrix operations

Tensor Core Architecture

- Block-based matrix computation

- Fixed operation patterns

- Very high parallelism

- Limited instruction flexibility

- Optimized only for matrix math

In short:

- CUDA cores are flexible

- Tensor cores are specialized
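The contrast between element-wise and block-based computation can be sketched in plain Python. The example below computes the same matrix product twice: once element by element, the way a general-purpose core would, and once as a sequence of fixed-size tile operations. The tile edge `TILE = 4` and all function names are assumptions chosen for illustration; actual Tensor core tile shapes depend on the architecture and data type.

```python
TILE = 4  # hypothetical tile edge, chosen only for illustration


def mma_tile(a, b, c):
    """D = A x B + C on TILE x TILE tiles (the fixed operation pattern)."""
    return [[c[i][j] + sum(a[i][k] * b[k][j] for k in range(TILE))
             for j in range(TILE)] for i in range(TILE)]


def get_tile(m, row, col):
    """Extract the TILE x TILE block whose top-left corner is (row, col)."""
    return [r[col:col + TILE] for r in m[row:row + TILE]]


def tiled_matmul(a, b):
    """Multiply two square matrices by accumulating tile-level MMAs."""
    n = len(a)
    assert n % TILE == 0, "matrix size must be a multiple of the tile size"
    out = [[0.0] * n for _ in range(n)]
    for i in range(0, n, TILE):
        for j in range(0, n, TILE):
            acc = [[0.0] * TILE for _ in range(TILE)]
            for k in range(0, n, TILE):
                # each step consumes whole tiles, not individual elements
                acc = mma_tile(get_tile(a, i, k), get_tile(b, k, j), acc)
            for r in range(TILE):
                out[i + r][j:j + TILE] = acc[r]
    return out


def elementwise_matmul(a, b):
    """Reference product computed one output element at a time."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


n = 8
a = [[float(i + j) for j in range(n)] for i in range(n)]
b = [[float(i - j) for j in range(n)] for i in range(n)]
tiled = tiled_matmul(a, b)
reference = elementwise_matmul(a, b)
print(tiled == reference)  # True
```

Both paths give the same result. The difference is that the tiled version issues far fewer, much larger operations, which is what specialized hardware can exploit.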

Supported Data Types and Precision Levels

CUDA Core Data Types

CUDA cores support FP32 (single precision), FP64 (double precision), INT32, INT64, and Boolean and logical operations.

FP64 is especially important for scientific simulations, weather modeling, physics, and engineering analysis.

Tensor Core Data Types

Tensor cores support FP16, BF16, TF32 (Ampere and later), FP8 (newer architectures), INT8, and, in some cases, INT4.

These lower-precision formats are ideal for AI because neural networks tolerate small numerical errors. FP64 on Tensor cores is limited to data-center GPUs such as the A100 and H100; Tensor cores on consumer GPUs do not support it.
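To get a feel for how much precision the lower formats give up, the following sketch rounds a value to FP16 and FP32 using Python's standard `struct` module and compares the relative errors. The helper names `to_fp16` and `to_fp32` are illustrative, and this is a CPU-side emulation of the number formats, not Tensor core code.

```python
import struct


def to_fp16(x):
    """Round a Python float (FP64) to the nearest FP16 value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x):
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


value = 0.1
err16 = abs(to_fp16(value) - value) / value
err32 = abs(to_fp32(value) - value) / value
print(f"FP16 relative error: {err16:.2e}")
print(f"FP32 relative error: {err32:.2e}")

# FP16 has an 11-bit significand, so above 2048 it can only represent even integers.
print(to_fp16(2049.0))   # 2048.0
# The largest finite FP16 value is 65504.
print(to_fp16(65504.0))  # 65504.0
```

The FP16 error is several orders of magnitude larger than the FP32 error, yet still small enough for most neural network weights and activations.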

Types of Operations Handled

CUDA cores handle addition, subtraction, multiplication, division, bitwise operations, conditional logic, and memory operations. They are suitable for general arithmetic.

Tensor cores handle fused matrix multiply-accumulate operations on fixed-size matrix tiles, which form the core of deep learning kernels. They are not general-purpose units.

Performance Characteristics and Throughput

CUDA cores provide moderate computational throughput while maintaining high numerical precision, making them suitable for a wide range of general-purpose workloads. They offer flexible execution and strong support for complex control flow, which makes them effective for control-heavy applications such as simulations, scientific computing, and traditional HPC tasks.

In contrast, Tensor cores deliver extremely high throughput by being highly optimized for matrix-based computations. They are designed specifically to accelerate matrix multiply-accumulate operations and can run them many times faster than CUDA cores in AI and deep learning workloads. Although Tensor cores operate at lower precision, this reduced precision is generally acceptable for neural networks and does not significantly impact model accuracy.

In AI workloads, peak Tensor core throughput is typically 10× or more above what the CUDA cores of the same GPU can deliver. Measured end-to-end speedups are usually smaller, because memory bandwidth and non-matrix work also limit performance.
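As a rough back-of-the-envelope check on figures like these, the sketch below estimates the ideal time for one large matrix multiplication at two peak throughput levels. The two TFLOPS constants are assumptions taken from NVIDIA's published A100 peak figures (FP32 on CUDA cores and dense FP16 on Tensor cores); real kernels reach only a fraction of peak, so treat the result as an upper bound on the ratio, not a benchmark.

```python
def matmul_flops(m, n, k):
    """Floating-point operations in an (m x k) by (k x n) product:
    one multiply and one add for each of the m * n * k terms."""
    return 2 * m * n * k


def ideal_seconds(flops, peak_tflops):
    """Time to execute `flops` operations at a given peak rate, ignoring memory limits."""
    return flops / (peak_tflops * 1e12)


# Assumed peak figures (A100 datasheet values); substitute your own GPU's numbers.
FP32_CUDA_TFLOPS = 19.5
FP16_TENSOR_TFLOPS = 312.0

flops = matmul_flops(8192, 8192, 8192)
t_cuda = ideal_seconds(flops, FP32_CUDA_TFLOPS)
t_tensor = ideal_seconds(flops, FP16_TENSOR_TFLOPS)
speedup = t_cuda / t_tensor

print(f"CUDA cores (FP32):   {t_cuda * 1e3:.2f} ms")
print(f"Tensor cores (FP16): {t_tensor * 1e3:.2f} ms")
print(f"Ideal speedup: {speedup:.1f}x")  # Ideal speedup: 16.0x
```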

CUDA Cores and Tensor Cores Inside a Streaming Multiprocessor (SM)

Modern NVIDIA GPUs organize hardware into Streaming Multiprocessors (SMs).

Each SM contains CUDA cores, Tensor cores, load/store units, shared memory, and warp schedulers.

Both core types work together: CUDA cores handle control logic, data movement, and non-matrix operations, while Tensor cores accelerate matrix math.

This coexistence allows efficient workload execution.

Programming and Software Stack Support

Modern NVIDIA GPUs are powerful not only because of their hardware, but also because of the strong software ecosystem built around them. CUDA cores and Tensor cores are fully supported through a layered programming and software stack. This stack allows developers to work at different levels, from low-level control to high-level AI frameworks, depending on their needs and expertise.

The software stack is designed to make GPU programming efficient, flexible, and scalable across different workloads.

CUDA Programming Model

The CUDA programming model is the foundation of GPU computing on NVIDIA hardware. It allows developers to write programs that run directly on the GPU using parallel execution.

Low-Level Control: CUDA provides fine-grained control over GPU hardware. Developers can manage thread and block organization, the memory hierarchy (global, shared, registers), synchronization between threads, and execution order and parallelism.

Explicit Kernel Programming: In CUDA, computation is written as kernels. A kernel is a function that runs on the GPU and is executed by thousands of threads in parallel. Explicit kernel programming is especially useful when existing libraries do not fully match the workload requirements.
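The kernel idea can be illustrated without a GPU. The sketch below emulates a CUDA-style launch in plain Python: every "thread" runs the same kernel body and uses its block and thread indices to pick the element it works on. The `launch` helper is hypothetical and runs threads one after another, whereas a GPU would run them in parallel; only the index arithmetic mirrors what a typical CUDA kernel does.

```python
def vector_add_kernel(block_idx, block_dim, thread_idx, a, b, out):
    """Body executed by every emulated thread, mirroring a typical CUDA vector add."""
    i = block_idx * block_dim + thread_idx  # global thread index
    if i < len(out):                        # guard threads that fall past the data
        out[i] = a[i] + b[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Hypothetical launcher: visits each (block, thread) pair sequentially."""
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)


n = 10
a = [float(i) for i in range(n)]
b = [float(2 * i) for i in range(n)]
out = [0.0] * n

block_dim = 4
grid_dim = (n + block_dim - 1) // block_dim  # round up so every element is covered
print(grid_dim)  # 3

launch(vector_add_kernel, grid_dim, block_dim, a, b, out)
print(out[:4])  # [0.0, 3.0, 6.0, 9.0]
```

With 3 blocks of 4 threads, 12 threads are launched for 10 elements, which is why the bounds check inside the kernel is needed.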

Access to Both CUDA Cores and Tensor Cores: CUDA provides access to both CUDA cores and Tensor cores within the same programming environment. CUDA cores are used for general-purpose instructions and Tensor cores are used for matrix multiply-accumulate operations.

CUDA Libraries

NVIDIA provides highly optimized libraries that sit on top of CUDA. These libraries hide hardware complexity while delivering near-maximum performance.

cuBLAS – Linear Algebra Library: cuBLAS is NVIDIA’s GPU-accelerated implementation of BLAS (Basic Linear Algebra Subprograms). It provides matrix multiplication, vector operations, and linear system solvers. cuBLAS automatically uses CUDA cores for full-precision operations and Tensor cores for supported low-precision matrix operations. This makes cuBLAS suitable for both traditional HPC workloads and modern AI workloads.

cuDNN – Deep Learning Primitives: cuDNN is a GPU-optimized library for deep learning operations. It includes highly optimized implementations of convolution layers, activation functions, pooling operations, normalization layers, and recurrent neural network components.

CUTLASS – Tensor Core Programming: CUTLASS (CUDA Templates for Linear Algebra Subroutines) is a low-level library designed for custom high-performance matrix operations. CUTLASS is commonly used by library developers, framework developers, and performance engineers. It enables maximum Tensor core utilization but requires deep knowledge of GPU architecture.

TensorRT – Inference Optimization: TensorRT is an inference optimization framework designed to deploy trained AI models efficiently. TensorRT provides graph optimization, kernel fusion, precision calibration, Tensor core acceleration, and reduced inference latency. It converts trained models into highly optimized execution engines that make full use of Tensor cores during inference. TensorRT is widely used in production AI systems.

AI Framework Support

High-level AI frameworks make GPU acceleration accessible without requiring low-level programming knowledge.

TensorFlow: TensorFlow automatically detects GPU capabilities and uses Tensor cores when possible. Features include automatic mixed-precision training, optimized GPU kernels, and integration with cuDNN and cuBLAS. Developers can enable Tensor core acceleration with minimal code changes.

PyTorch: PyTorch provides dynamic computation graphs and strong GPU support. It offers automatic Tensor core usage, mixed-precision training via AMP, and seamless integration with CUDA libraries. PyTorch is widely used for research and production AI workloads.

JAX: JAX focuses on high-performance numerical computing and machine learning. Key features include automatic differentiation, XLA compiler optimizations, efficient GPU execution, and transparent Tensor core usage. JAX is popular for large-scale AI research and experimentation.

Automatic Use of Tensor Cores

Modern AI frameworks use Tensor cores automatically when three conditions hold: the tensors use a supported data type (FP16, BF16, FP8), the matrix dimensions are compatible with the Tensor core tile sizes, and mixed-precision training is enabled. This automation reduces development complexity and delivers high performance without manual optimization.
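The dimension requirement is the one that most often trips people up. A commonly cited guideline is to keep matrix dimensions at multiples of 8 for FP16 data, although the exact requirement depends on the GPU architecture, the data type, and the library version. The sketch below shows one way to round a dimension up and zero-pad a matrix accordingly. The helper names `round_up` and `pad_matrix` and the default multiple of 8 are assumptions for illustration, not part of any framework API.

```python
def round_up(value, multiple):
    """Smallest multiple of `multiple` that is >= value."""
    return ((value + multiple - 1) // multiple) * multiple


def pad_matrix(matrix, multiple=8):
    """Zero-pad a list-of-rows matrix so both dimensions are multiples of `multiple`.

    Zero padding leaves matrix products unchanged in the original region.
    """
    rows, cols = len(matrix), len(matrix[0])
    new_rows, new_cols = round_up(rows, multiple), round_up(cols, multiple)
    padded = [row + [0.0] * (new_cols - cols) for row in matrix]
    padded += [[0.0] * new_cols for _ in range(new_rows - rows)]
    return padded


# Example: a vocabulary size that is not a multiple of 8
print(round_up(50257, 8))  # 50264

m = [[1.0] * 5 for _ in range(3)]           # 3 x 5 matrix
p = pad_matrix(m)
print(len(p), len(p[0]))                    # 8 8
```

In practice the same effect is usually achieved by choosing layer sizes, batch sizes, and vocabulary sizes that are already aligned.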

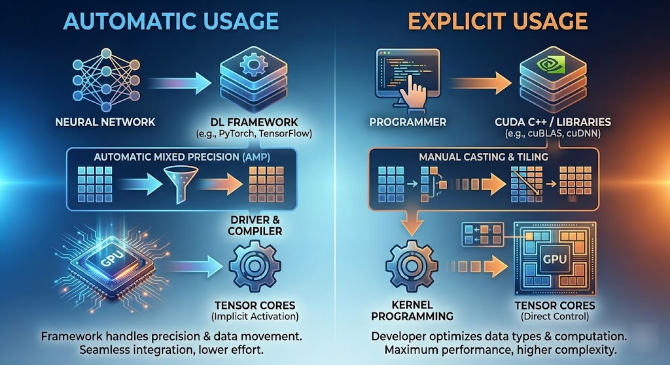

Automatic vs Explicit Tensor Core Usage

Automatic Usage: Most modern deep learning frameworks, including TensorFlow and PyTorch, have built-in support for Tensor Cores. Their libraries select Tensor Core kernels automatically when the data types and shapes allow it, so user code usually needs little more than enabling mixed precision.

Explicit Usage: For advanced users or those working on highly customized machine learning models, there’s an option to manually optimize the use of Tensor Cores. This is where explicit usage comes into play. Explicit control over Tensor Core operations allows fine-grained optimizations, enabling the user to maximize performance for specific workloads.

Impact of mixed-precision computing on performance and accuracy

Mixed-precision computing uses different numerical precisions for different operations within a computational task. In deep learning, this usually means using low precision (such as FP16) for the bulk of the computation, such as the multiplications inside matrix products, and higher precision (such as FP32) for accumulating the results, which preserves numerical stability and accuracy.

Tensor cores are designed for mixed-precision workflows.
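Why the accumulator precision matters can be shown with a small emulation. The sketch below computes the same dot product twice with FP16 inputs: once keeping the running sum in FP16 as well, and once keeping it in a wider accumulator. It uses Python's `struct` module to round to FP16, and the Python float accumulator (FP64) stands in for the FP32 accumulator a GPU would typically use. The function names are illustrative.

```python
import struct


def to_fp16(x):
    """Round a Python float to the nearest FP16 value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def dot_fp16_accumulate(a, b):
    """Dot product where products AND the running sum are rounded to FP16."""
    acc = 0.0
    for x, y in zip(a, b):
        prod = to_fp16(to_fp16(x) * to_fp16(y))
        acc = to_fp16(acc + prod)   # the sum is squeezed back into FP16 each step
    return acc


def dot_mixed(a, b):
    """Dot product with FP16 inputs but a wider accumulator (mixed precision)."""
    acc = 0.0
    for x, y in zip(a, b):
        acc += to_fp16(x) * to_fp16(y)
    return acc


a = [1.0] * 4096
b = [1.0] * 4096
print(dot_fp16_accumulate(a, b))  # 2048.0
print(dot_mixed(a, b))            # 4096.0
```

The pure FP16 sum stalls at 2048 because FP16 cannot represent 2049, so every further addition of 1 is rounded away. The mixed-precision version gets the exact answer with the same low-precision inputs.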

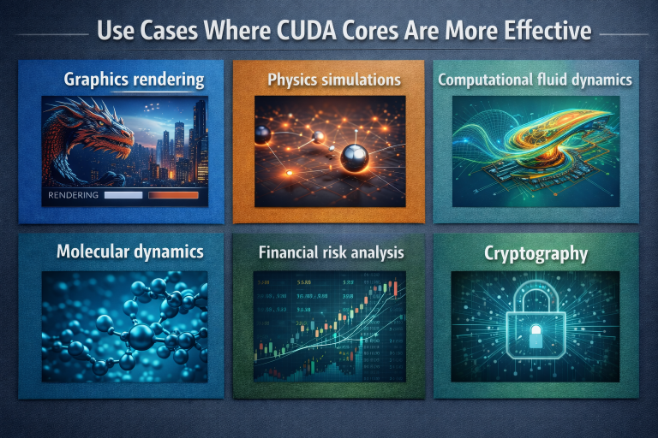

Use Cases Where CUDA Cores Are More Effective

While Tensor Cores are optimized for matrix-heavy computations commonly found in deep learning tasks, CUDA cores are designed for more general-purpose parallel computing.

CUDA cores are better for:

- Graphics rendering: Graphics rendering is one of the primary use cases for CUDA cores. The shader processors that CUDA exposes for general-purpose computing were originally built to accelerate rendering, and they remain particularly effective at processing graphical elements in parallel.

- Physics simulations: Physics simulations often involve complex calculations that must be performed in parallel. Examples include simulating the behavior of particles, the interaction of forces, or the motion of bodies under various conditions.

- Computational fluid dynamics: CFD requires flexibility and the ability to handle massive amounts of data, making CUDA cores ideal for accelerating the parallel computations needed for such simulations.

- Molecular dynamics: Molecular dynamics often involves time-sensitive calculations and simulations that need both precision and the ability to scale computations across large numbers of particles, making CUDA cores a perfect fit.

- Financial risk analysis: Financial models often require high precision and speed, particularly when handling large datasets of financial transactions or market behavior, making CUDA cores a valuable asset for these applications.

- Cryptography: Cryptographic algorithms rely on exact integer and bitwise operations repeated over large amounts of data, a pattern that CUDA cores execute efficiently in parallel.

These workloads need precision and flexibility.

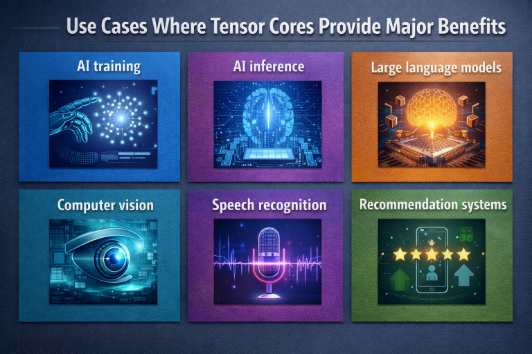

Use Cases Where Tensor Cores Provide Major Benefits

Tensor cores excel in:

- AI training: AI training involves learning from large datasets to improve machine learning models. Tensor Cores accelerate matrix operations in neural networks, speeding up training and reducing time to convergence.

- AI inference: AI inference is the process of using a trained model to make predictions. Tensor Cores speed up real-time processing of inputs, enabling quick and efficient real-time predictions.

- Large language models: LLMs like GPT or BERT require intensive matrix computations. Tensor Cores accelerate tasks like self-attention in Transformers, which speeds up training and inference.

- Computer vision: Computer vision tasks like image classification, segmentation, and object detection rely heavily on convolutions. Tensor Cores speed up these operations for faster real-time processing.

- Speech recognition: Speech recognition systems convert spoken language into text, requiring matrix operations. Tensor Cores help accelerate these tasks, reducing latency in real-time transcription.

- Recommendation systems: Recommendation systems use matrix factorization and deep learning models to suggest personalized content. Tensor Cores accelerate these computations, improving recommendation speed and efficiency.

Impact on Deep Learning Training and Inference

Tensor Cores, NVIDIA’s specialized hardware for accelerating deep learning operations, have a major impact on both training and inference processes in AI systems.

Training Time

Tensor cores significantly reduce:

- Training time: Tensor Cores speed up matrix operations, significantly reducing the time needed to train deep learning models.

- Energy consumption: Faster training means less GPU usage, reducing overall energy consumption during model training.

- Hardware cost per experiment: With Tensor Cores, training experiments cost less by requiring fewer hours of GPU usage, saving on hardware costs.

Inference Latency

Tensor cores improve:

- Throughput: Tensor Cores process matrix operations faster, increasing the number of inferences that can be made simultaneously, ideal for large-scale deployment.

- Latency: Faster processing of predictions results in lower latency, essential for real-time applications.

- Real-time performance: Tensor Cores ensure real-time performance for systems like autonomous vehicles or speech-to-text, where quick responses are necessary.

Limitations of Tensor Cores

While Tensor Cores are highly efficient for certain tasks, they do have limitations. They are not suitable for all types of workloads.

Tensor cores are not suitable for:

- FP64 workloads: Tensor Cores are optimized for reduced-precision formats such as FP16, BF16, and TF32. FP64 (double precision), used in scientific computing where high precision is needed, is supported on Tensor Cores only in some data-center GPUs such as the A100. On other GPUs, FP64 work runs on CUDA cores.

- Non-matrix algorithms: Tensor Cores excel in matrix operations (e.g., matrix multiplication) but are not good for non-matrix algorithms. Algorithms like sorting, searching, and graph traversal do not use matrices and are better suited for traditional CPU/GPU processing.

- Control-heavy code: Tensor Cores are not effective with control-heavy code, where frequent decisions and branching are required. Tensor Cores work best when tasks are predictable and can be processed in parallel. Conditional branching can cause inefficiencies.

- Irregular data patterns: Tensor Cores perform poorly on irregular data patterns, like unstructured sparse matrices or non-contiguous data. Tensor Cores are optimized for dense, regular data (newer generations also accelerate a fixed 2:4 structured sparsity pattern), so irregular sparsity leads to underutilization of their power.

Common Misconceptions

Tensor Cores are powerful, but there are several misconceptions about their use and capabilities.

1. Tensor cores replace CUDA cores: False. Both are needed.

Tensor Cores are specialized for accelerating matrix operations, particularly in deep learning, but CUDA cores are still necessary for general-purpose parallel computing.

Tensor Cores can’t replace the versatility and power of CUDA cores for other tasks that aren’t matrix-heavy.

2. Tensor cores are only for AI GPUs: False. Many GPUs support both.

Tensor Cores are present in several NVIDIA GPUs, including those from the GeForce and Quadro series, not just AI-specific models. Many GPUs from the Turing and Ampere architectures include Tensor Cores for improved performance in various tasks. You don’t need an AI-specific GPU to take advantage of Tensor Cores.

3. More Tensor cores means better performance always: False. Depends on workload.

Tensor Cores are optimized for matrix-heavy tasks, especially in deep learning. If your workload doesn’t rely on these types of operations, more Tensor Cores won’t offer much benefit. If your application uses control-heavy code, sparse data, or non-matrix algorithms, the extra Tensor Cores may not make a significant difference.

4. CUDA cores are obsolete: False. They remain essential.

CUDA cores are still the backbone of general-purpose computation on GPUs. They handle a wide range of tasks, from graphics rendering to general computation and parallel processing. Tensor Cores are optimized for specific matrix operations in AI and deep learning, but CUDA cores are still critical for non-AI workloads.

Hardware Selection Guidance

Choosing the right GPU depends largely on the workload you intend to run.

Choose GPUs based on workload:

- AI and deep learning: For AI and deep learning tasks, such as model training and inference, GPUs with strong Tensor Core support are ideal. These workloads rely heavily on matrix operations, which Tensor Cores are specifically designed to accelerate.

- Scientific computing: For workloads that demand double-precision floating-point operations (FP64), such as scientific computing (e.g., simulations, physical modeling, high-performance computing), high FP64 CUDA performance is a top priority.

- Mixed workloads: For mixed workloads that involve a combination of AI tasks, scientific computations, and general-purpose computing, a balanced GPU offering both CUDA cores and Tensor Cores is the best option. These workloads could include tasks like image processing, video encoding, or machine learning, which require flexibility in handling various types of computations.

- Inference: For inference tasks, such as running trained AI models to make predictions (e.g., real-time image recognition, speech-to-text), Tensor Cores and memory bandwidth are the two most critical factors.

Understanding the workload is more important than raw specs.

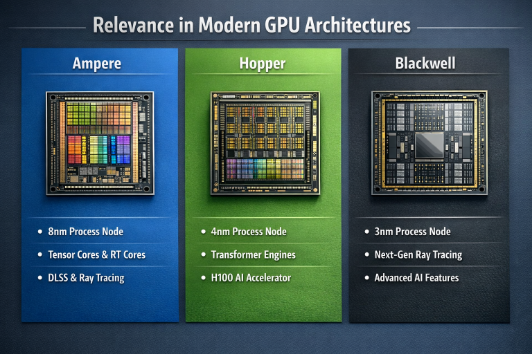

Relevance in Modern GPU Architectures

Ampere: The NVIDIA Ampere architecture marked a significant leap forward in AI acceleration. It introduced the third generation of Tensor Cores, which brought substantial improvements over previous generations.

- Improved Tensor Cores: NVIDIA cites up to 20x higher AI performance for Ampere’s Tensor Cores compared to the previous Volta architecture, a peak figure that relies on TF32 and structured sparsity.

- TF32 Support: A key innovation was the introduction of TensorFloat-32 (TF32), a new math mode that combines the range of FP32 with the precision of FP16, accelerating AI training on FP32 models without requiring code changes.

- Better Mixed Precision: Ampere further optimized mixed-precision training, allowing models to be trained faster by using lower-precision formats (like FP16) where possible, without sacrificing accuracy.
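TF32 keeps the 8-bit exponent of FP32 and a 10-bit mantissa like FP16. The sketch below emulates that format on the CPU by rounding an FP32 bit pattern down to 10 mantissa bits. The helper `to_tf32` is an illustration only: it uses round-to-nearest-even, ignores NaN and infinity, and makes no claim about the exact rounding the hardware applies.

```python
import struct


def to_tf32(x):
    """Emulate TF32 by keeping 10 of FP32's 23 mantissa bits (finite inputs only)."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    lsb = (bits >> 13) & 1                      # lowest mantissa bit that survives
    bits = (bits + 0xFFF + lsb) & 0xFFFFFFFF    # round to nearest, ties to even
    bits &= ~0x1FFF & 0xFFFFFFFF                # clear the 13 dropped mantissa bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


# Precision: 10 mantissa bits, so steps of 2**-10 near 1.0 survive, finer ones do not.
print(to_tf32(1.0 + 2 ** -10) == 1.0 + 2 ** -10)  # True
print(to_tf32(1.0 + 2 ** -12))                    # 1.0

# Range: a value far beyond FP16's maximum (65504) is still representable.
big = 1e30
rel_err = abs(to_tf32(big) - big) / big
try:
    struct.pack("<e", big)
    fits_fp16 = True
except OverflowError:
    fits_fp16 = False
print(fits_fp16)  # False
```

This is why TF32 can act as a drop-in mode for FP32 training code: values keep their full dynamic range, and only the trailing mantissa bits of the matrix-multiply inputs are lost.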

Hopper: The Engine for Transformers: Building on Ampere, the NVIDIA Hopper architecture was designed with a specific focus on accelerating the massive language models that are transforming AI. It introduced the fourth generation of Tensor Cores, with several key enhancements.

- Transformer Engine: Hopper’s most significant addition is the Transformer Engine, a combination of software and hardware that automatically casts and scales data to the most appropriate format, dramatically speeding up transformer model training.

- FP8 Support: The Transformer Engine leverages the new FP8 data format, which trades some precision for higher throughput and a smaller memory footprint while keeping enough range for training large language models.

- AI-Focused Enhancements: Hopper’s Tensor Cores include numerous other optimizations, such as faster activation functions, to further accelerate common operations in deep learning.

Blackwell: The NVIDIA Blackwell architecture represents the latest step in this evolution, designed to power the next generation of large-scale AI systems.

- Advanced AI Acceleration: Blackwell’s Tensor Cores are expected to provide even greater performance for both training and inference of massive AI models.

- Improved Efficiency: A key focus of Blackwell is on energy efficiency, enabling data centers to pack more compute power into the same power envelope.

- Designed for Large-Scale AI Systems: Blackwell is architected from the ground up to be the building block for massive, interconnected AI supercomputers, facilitating the training of trillion-parameter models.

Each generation improves Tensor cores while keeping CUDA cores essential.

Conclusion

CUDA cores and Tensor cores serve different but complementary roles inside modern NVIDIA GPUs.

- CUDA cores provide flexibility, precision, and general-purpose computing

- Tensor cores provide massive acceleration for matrix-based AI workloads

Neither replaces the other. Together, they enable GPUs to handle a wide range of workloads efficiently. Understanding their differences allows better GPU selection, better performance, and better use of modern computing hardware.
