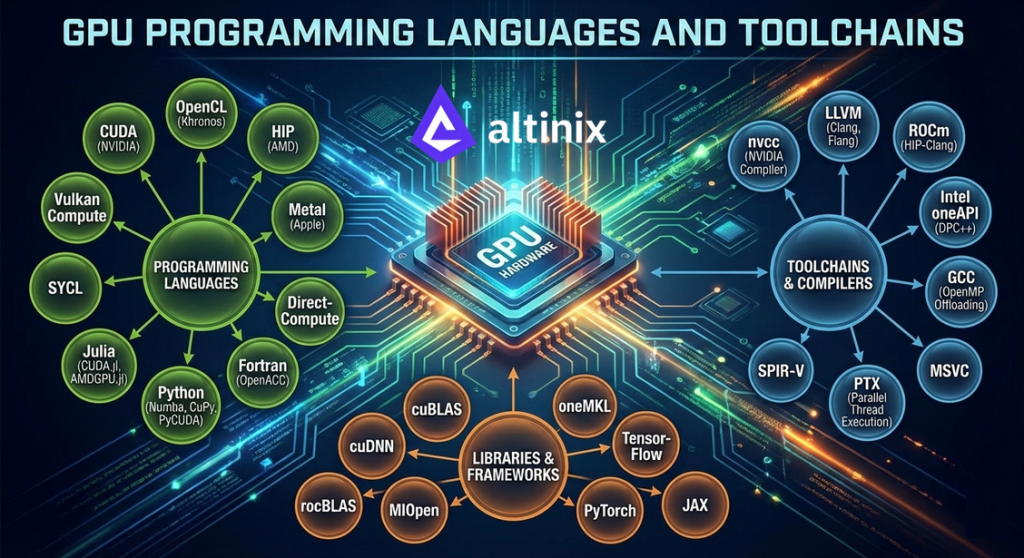

GPU Programming Languages and Toolchains

GPU programming languages and toolchains are central to high-performance computing (HPC), artificial intelligence (AI), and machine learning (ML). GPUs (Graphics Processing Units) are specialized processors built to run many computations in parallel, which makes them well suited to graphics rendering, simulations, and deep learning. To harness that parallelism, developers use programming languages, libraries, and toolchains designed for parallel computing.

1. GPU Programming Languages

These languages are specifically designed or adapted to leverage the parallel architecture of GPUs for general-purpose computing.

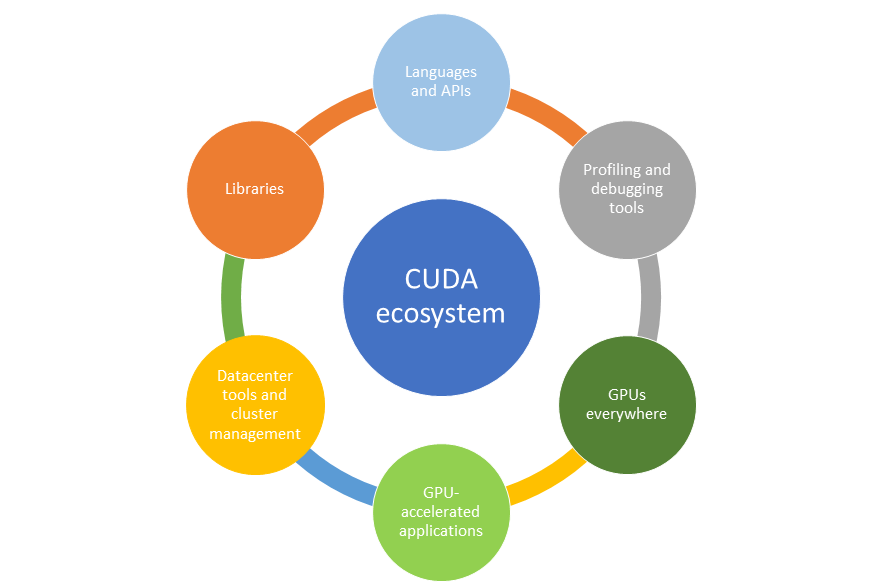

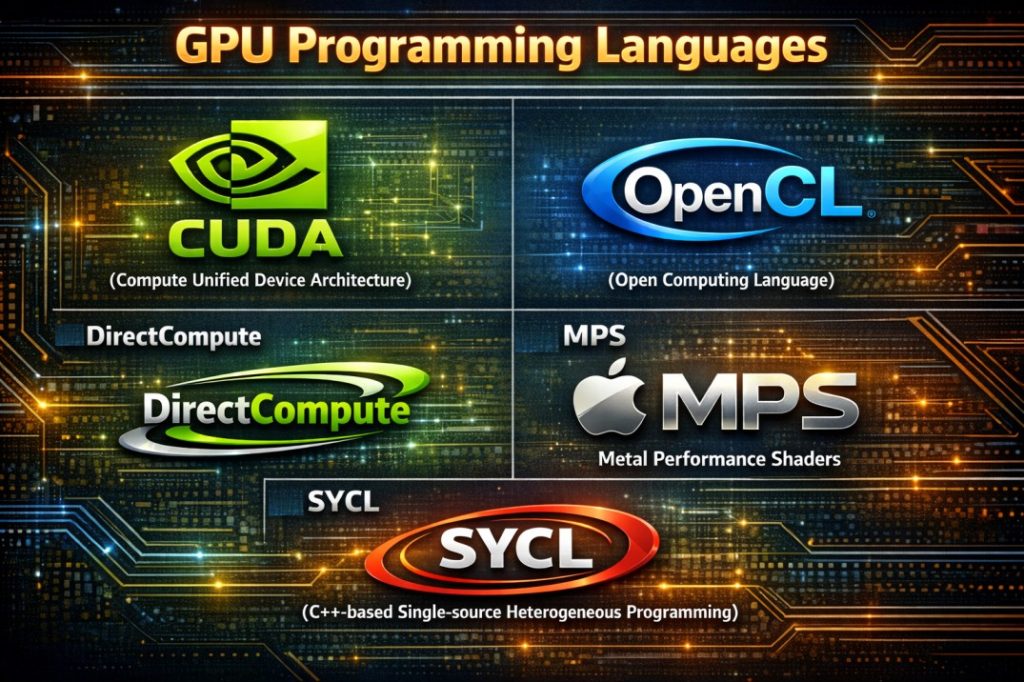

a. CUDA (Compute Unified Device Architecture)

- Language: CUDA is NVIDIA’s proprietary parallel computing platform and programming model. It allows developers to write software that executes across GPUs and CPUs.

- Syntax: CUDA extends C/C++ with function qualifiers: `__global__` marks a kernel that runs on the GPU and is launched from the CPU, `__device__` marks a function callable only from GPU code, and `__host__` marks a function that runs on the CPU.

- Toolchain: CUDA provides a suite of compilers (nvcc), debuggers, profilers, and libraries (like cuBLAS, cuFFT, cuDNN).

- Libraries: NVIDIA provides libraries for deep learning (cuDNN), linear algebra (cuBLAS), and more, making CUDA particularly suitable for AI/ML tasks.

- Key Use Cases: Deep learning and AI model training, high-performance computing (HPC), scientific simulations, and data analytics.
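To make the kernel model concrete, here is a minimal CPU-only sketch in Python that emulates how a CUDA kernel maps threads to data. It does not run on a GPU. The function names `vector_add_kernel` and `launch` are hypothetical and exist only for illustration; the index formula (block index times block size plus thread index) and the ceiling-division grid size are the usual CUDA idioms.

```python
# CPU-only emulation of how a CUDA kernel maps threads to data.
# Nothing here runs on a GPU; the names mirror CUDA's built-in variables.

def vector_add_kernel(block_idx, block_dim, thread_idx, a, b, out):
    """Body of a hypothetical __global__ kernel for one (block, thread) pair."""
    i = block_idx * block_dim + thread_idx  # global thread index
    if i < len(out):                        # guard: the last block may overshoot
        out[i] = a[i] + b[i]

def launch(kernel, n, block_dim, *args):
    """Emulate kernel<<<grid_dim, block_dim>>>(...) with sequential loops."""
    grid_dim = (n + block_dim - 1) // block_dim  # ceiling division
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)
    return grid_dim

a = [1.0, 2.0, 3.0, 4.0, 5.0]
b = [10.0, 20.0, 30.0, 40.0, 50.0]
out = [0.0] * len(a)
blocks = launch(vector_add_kernel, len(a), 4, a, b, out)
print(blocks, out)  # 2 [11.0, 22.0, 33.0, 44.0, 55.0]
```

With 5 elements and 4 threads per block, two blocks are needed, and the bounds check keeps the three surplus threads in the second block from writing past the end of the array.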

b. OpenCL (Open Computing Language)

- Language: OpenCL is an open, royalty-free standard, maintained by the Khronos Group, for writing programs that execute across heterogeneous platforms (CPUs, GPUs, and other accelerators).

- Syntax: OpenCL is based on C99, with some extensions for parallel programming, including kernels that run on GPUs or other accelerators.

- Portability: One of OpenCL’s key advantages is that it works across hardware from different vendors (NVIDIA, AMD, Intel, etc.).

- APIs: The OpenCL host API covers device discovery, task management through command queues, memory management, and synchronization.

- Key Use Cases: Cross-vendor GPU applications, image and video processing, and embedded and mobile GPU workloads.

c. DirectCompute

- Language: DirectCompute is part of the Microsoft DirectX suite, designed for general-purpose GPU programming on Windows platforms.

- Syntax: It uses HLSL (High-Level Shading Language), a language originally designed for writing shaders in graphics programming.

- Platform: DirectCompute is used primarily on Windows and runs on DirectX-capable GPUs, including those from NVIDIA, AMD, and Intel.

d. Metal Performance Shaders (MPS)

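- Language: Metal is Apple’s graphics and compute API for iOS, macOS, and other Apple platforms. Metal Performance Shaders (MPS) is a framework of pre-optimized compute and image-processing kernels built on top of it.

- Syntax: Custom Metal kernels are written in the Metal Shading Language (MSL), which is based on C++.

- Platform: Metal is optimized for Apple hardware (e.g., iPhone, iPad, and Mac), which makes it a natural choice for high-performance compute on those devices.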

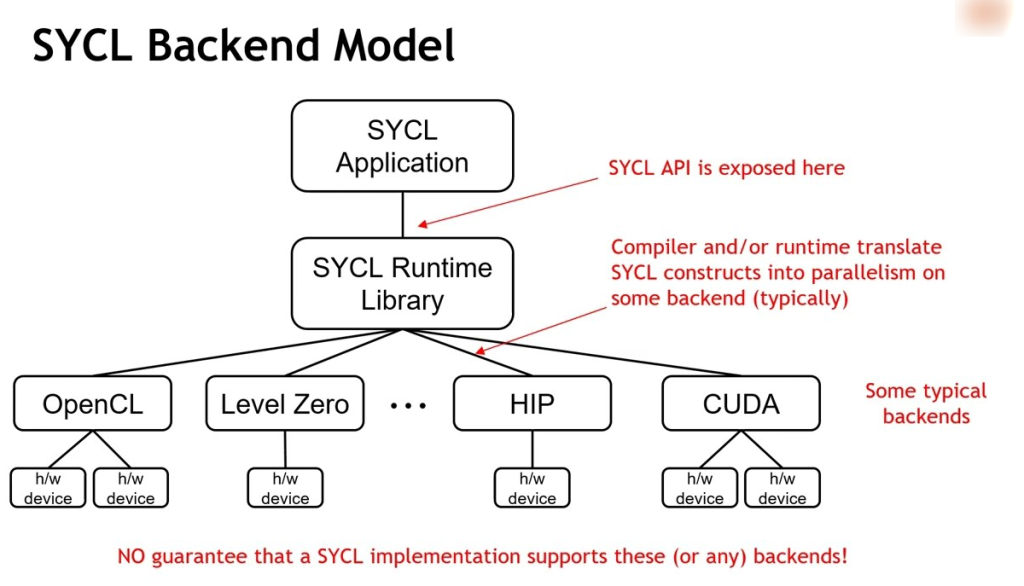

e. SYCL (C++-based Single-source Heterogeneous Programming Language)

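- Language: SYCL is a Khronos Group standard for single-source heterogeneous programming in modern C++, using features such as lambda expressions and templates. It was originally layered on top of OpenCL; since SYCL 2020, implementations can target other backends as well.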

- Syntax: It simplifies the process of writing parallel programs for heterogeneous platforms (CPU, GPU, FPGA) and enables single-source C++ programming.

- Key Advantage: SYCL provides easier integration with standard C++ codebases and is portable across different devices and vendors.

- Toolchain Includes: Intel oneAPI and its DPC++ compiler.

f. Vulkan Compute (maintained by the Khronos Group)

Vulkan Compute is the compute-shader path of Vulkan, a low-level API. It lets applications run high-performance compute work without setting up a graphics pipeline.

Key Use Cases: Real-time compute workloads, game engines and graphics-adjacent computation, and machine learning inference.

Toolchain Includes: Vulkan SDK and SPIR-V compiler tools.

g. HIP (developed by AMD)

HIP (Heterogeneous-computing Interface for Portability) is a C++ runtime API and kernel language that lets developers write portable GPU code that runs on both AMD and NVIDIA GPUs with minimal modification.

Key Use Cases: Portable HPC applications, migration from CUDA to AMD platforms, and scientific computing.

Toolchain Includes: ROCm (Radeon Open Compute) platform, HIP compiler and runtime.
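Much of a CUDA-to-HIP migration is mechanical renaming, because many HIP runtime calls mirror their CUDA counterparts. The sketch below illustrates that idea in Python with a small, hand-picked mapping. The function `toy_hipify` is hypothetical and is not AMD's tooling; the real converters (`hipify-perl` and `hipify-clang`) handle far more cases, including headers, libraries, and kernel launch syntax.

```python
import re

# Hand-picked subset of CUDA runtime names and their HIP counterparts.
CUDA_TO_HIP = {
    "cudaMalloc": "hipMalloc",
    "cudaMemcpy": "hipMemcpy",
    "cudaMemcpyHostToDevice": "hipMemcpyHostToDevice",
    "cudaMemcpyDeviceToHost": "hipMemcpyDeviceToHost",
    "cudaDeviceSynchronize": "hipDeviceSynchronize",
    "cudaFree": "hipFree",
}

# Word boundaries keep partial names (e.g. my_cudaFree_wrapper) untouched.
_PATTERN = re.compile(r"\b(" + "|".join(map(re.escape, CUDA_TO_HIP)) + r")\b")

def toy_hipify(source: str) -> str:
    """Rename the known CUDA identifiers in `source` to their HIP names."""
    return _PATTERN.sub(lambda m: CUDA_TO_HIP[m.group(1)], source)

cuda_src = "cudaMalloc(&d, n); cudaMemcpy(d, h, n, cudaMemcpyHostToDevice); cudaFree(d);"
hip_src = toy_hipify(cuda_src)
print(hip_src)
```

Identifiers outside the table pass through unchanged, which is why a real port still needs a review pass after automated translation.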

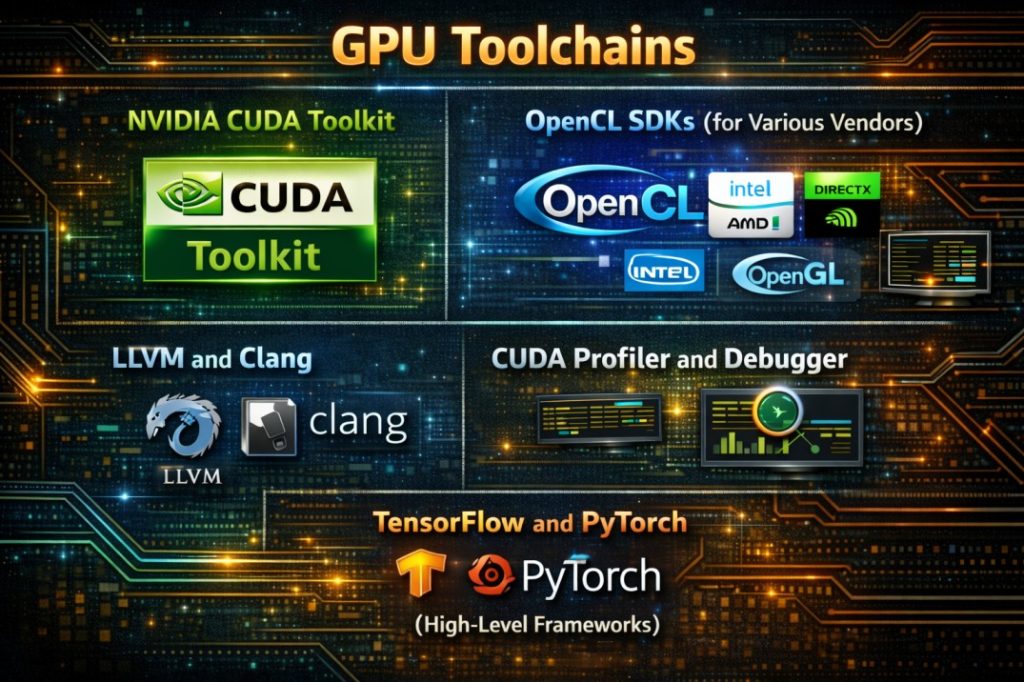

2. GPU Toolchains

GPU toolchains are the set of compilers, debuggers, and other utilities that facilitate GPU programming, debugging, and optimization.

a. NVIDIA CUDA Toolkit and Components:

- nvcc Compiler: The CUDA compiler for compiling CUDA programs.

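- Libraries: cuBLAS (for linear algebra), cuFFT (for fast Fourier transforms), and others. cuDNN (for deep learning) is distributed as a separate download.

- Tools: NVIDIA Nsight tools for debugging and profiling, CUDA-GDB for debugging, and the legacy Visual Profiler for performance analysis (deprecated in favor of Nsight Systems and Nsight Compute).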

- Platform: Exclusively for NVIDIA GPUs.

b. OpenCL SDKs (for Various Vendors)

- AMD ROCm (Radeon Open Compute): AMD’s open-source platform for GPU computing, which includes an OpenCL runtime and HIP, a CUDA-like programming model.

- Intel oneAPI: Intel’s cross-architecture development environment, which supports OpenCL and SYCL. DPC++ (Data Parallel C++) is Intel’s SYCL-based compiler for accelerator programming.

- Khronos OpenCL SDK: The SDK published by the Khronos Group (the maintainers of OpenCL). It bundles the OpenCL headers, the ICD loader, and sample code for building OpenCL programs.

c. DirectX/OpenGL Tools

- DirectX (Windows): The DirectX suite includes Visual Studio integration and debugging tools specifically for GPU programming using DirectCompute and HLSL.

- OpenGL (Cross-Platform): While OpenGL is primarily a graphics API, it also supports GPGPU tasks through compute shaders (OpenGL 4.3 and later).

d. LLVM and Clang for GPU Programming

- LLVM: LLVM is a set of modular and reusable compiler and toolchain technologies. It has support for compiling for GPUs (including OpenCL, CUDA, and other targets) through specific backends.

- Clang: Clang, LLVM’s C/C++ front end, can compile GPU code, including CUDA and OpenCL C, and serves as the basis for SYCL compilers such as DPC++.

e. CUDA Profiler and Debugger

- NVIDIA Nsight: A family of tools for debugging and profiling CUDA applications, including Nsight Systems (system-wide timelines), Nsight Compute (per-kernel analysis), and IDE integrations. They help locate performance bottlenecks and debug GPU code.

- CUDA-GDB: A debugger specifically designed for debugging GPU code running on NVIDIA GPUs.

f. TensorFlow and PyTorch (High-Level Frameworks)

- TensorFlow: TensorFlow uses CUDA to run on NVIDIA GPUs. You define and train deep learning models through its high-level API, and the backend handles placing operations on the GPU.

- PyTorch: Like TensorFlow, PyTorch uses CUDA for GPU acceleration on NVIDIA hardware. It also supports distributed training across multiple GPUs and machines.
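As a small illustration of how these frameworks hide the GPU details, the following Python sketch picks a device string at runtime. It assumes PyTorch may or may not be installed and falls back to the CPU in either case; `pick_device` is a hypothetical helper, while `torch.cuda.is_available()` is PyTorch's own check.

```python
def pick_device() -> str:
    """Return "cuda" when PyTorch can see a CUDA device, otherwise "cpu"."""
    try:
        import torch  # optional dependency; absent on many machines
    except ImportError:
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"

device = pick_device()
print(device)
```

In PyTorch code, the returned string is typically passed to `.to(device)` on tensors and models, so the same script runs with or without a GPU.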

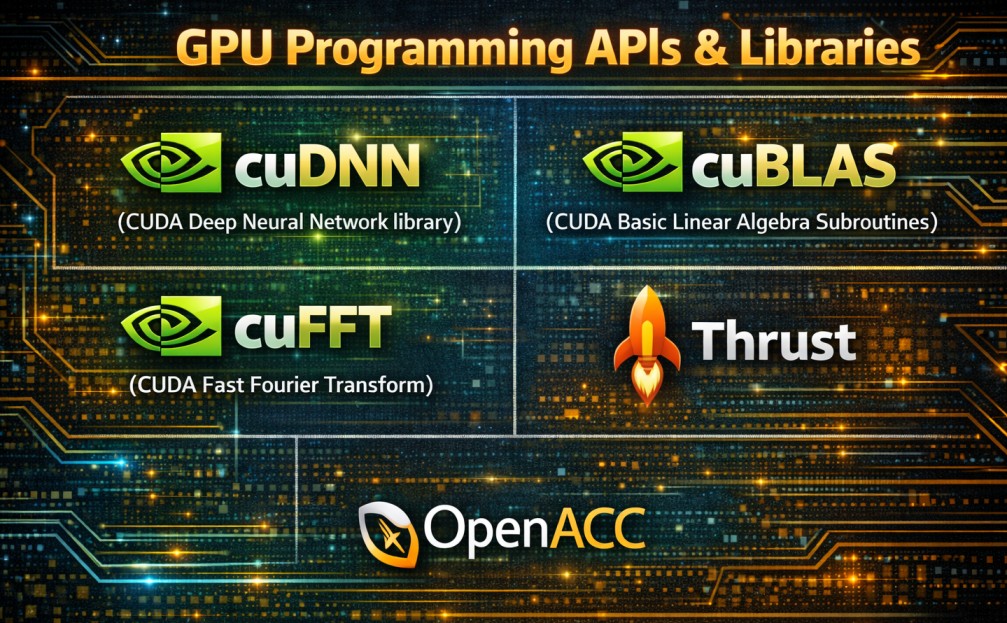

3. GPU Programming APIs & Libraries

These are high-level libraries and frameworks that abstract away much of the complexity of directly programming GPUs but still leverage GPU power.

a. cuDNN (CUDA Deep Neural Network library)

- A library from NVIDIA optimized for deep learning applications, providing high-performance primitives for convolution, pooling, and activation functions on GPUs.

b. cuBLAS (CUDA Basic Linear Algebra Subroutines)

- A high-performance library for linear algebra operations (matrix multiplication, etc.) optimized for GPUs.
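The core operation behind such libraries is GEMM (general matrix multiply), which computes alpha * A * B + beta * C. The pure-Python reference below is only a sketch of what the operation computes, not of how cuBLAS implements or exposes it. The function name `gemm` and the row-major nested-list layout are assumptions made for readability (cuBLAS itself uses column-major storage).

```python
def gemm(alpha, A, B, beta, C):
    """Return alpha * (A @ B) + beta * C for row-major nested lists."""
    m, k, n = len(A), len(B), len(B[0])
    assert all(len(row) == k for row in A), "inner dimensions must match"
    return [
        [alpha * sum(A[i][p] * B[p][j] for p in range(k)) + beta * C[i][j]
         for j in range(n)]
        for i in range(m)
    ]

A = [[1.0, 2.0], [3.0, 4.0]]
B = [[5.0, 6.0], [7.0, 8.0]]
C = [[1.0, 1.0], [1.0, 1.0]]
result = gemm(2.0, A, B, 0.5, C)
print(result)  # [[38.5, 44.5], [86.5, 100.5]]
```

Every output element is an independent dot product, which is why the operation maps so well onto thousands of GPU threads.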

c. cuFFT (CUDA Fast Fourier Transform)

- A library for efficient computation of FFTs on GPUs, particularly useful in signal processing, physics simulations, and image processing.

d. Thrust

- A parallel algorithm library that provides high-level abstractions for common parallel tasks (e.g., sorting, scanning, reductions), heavily optimized for NVIDIA GPUs.
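To show why a reduction parallelizes well, here is a CPU-only Python sketch of the pairwise (tree) pattern that GPU reductions commonly follow. It is not Thrust's implementation. `tree_reduce` is a hypothetical name, and the operator is assumed to be associative and commutative, since elements are combined out of their original order.

```python
import operator

def tree_reduce(values, op):
    """Reduce with a pairwise tree pattern; returns (result, number of steps)."""
    data = list(values)
    if not data:
        raise ValueError("cannot reduce an empty sequence")
    n, steps = len(data), 0
    while n > 1:
        half = (n + 1) // 2
        # On a GPU, each index i below would be handled by its own thread.
        for i in range(n - half):
            data[i] = op(data[i], data[i + half])
        n = half
        steps += 1
    return data[0], steps

total, steps = tree_reduce(range(1, 9), operator.add)
print(total, steps)  # 36 3
```

Eight values are combined in three steps rather than seven sequential additions; in general the step count grows with the logarithm of the input size.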

e. OpenACC

- OpenACC provides compiler directives (pragmas in C/C++, structured comments in Fortran) for parallelizing applications with minimal changes to code. It supports GPUs, other accelerators, and multi-core CPUs. It is higher-level than CUDA or OpenCL, trading fine-grained control for portability.

4. GPU Programming Best Practices

- Memory Management: Discrete GPUs have their own memory (device memory), which is typically smaller than system RAM but offers much higher bandwidth. Transfers between host and device are comparatively slow, so efficient allocation, minimal data transfer, and regular memory access patterns are crucial for performance.

- Parallelization: GPUs excel at parallel processing. Effective use of threads, warps, and blocks in CUDA, or the equivalent constructs in OpenCL and Metal, is key to maximizing performance.

- Optimization: Techniques such as loop unrolling, hiding memory latency, and keeping the hardware's compute units busy are vital for high-throughput GPU applications.
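The effect of memory access patterns can be estimated with a simple model. The Python sketch below counts how many memory segments a single warp touches when its threads read elements a fixed stride apart. The 32-thread warp, 4-byte elements, and 128-byte segments are simplifying assumptions chosen to resemble common NVIDIA figures, and `segments_touched` is a hypothetical helper; real hardware behavior depends on the architecture and cache configuration.

```python
def segments_touched(stride, warp_size=32, elem_bytes=4, segment_bytes=128):
    """Count distinct memory segments one warp touches for a strided access."""
    addresses = (t * stride * elem_bytes for t in range(warp_size))
    return len({addr // segment_bytes for addr in addresses})

for stride in (1, 2, 32):
    print(stride, segments_touched(stride))
# 1 1
# 2 2
# 32 32
```

Under this model, consecutive accesses (stride 1) are served by one segment, while a stride of 32 elements forces a separate segment for every thread, which is the pattern coalescing-friendly layouts try to avoid.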

Conclusion

The landscape of GPU programming is diverse, with languages like CUDA, OpenCL, SYCL, and Metal offering different features and compatibility with various platforms. Toolchains such as the CUDA Toolkit, NVIDIA Nsight, and OpenCL SDKs provide powerful debugging, profiling, and performance optimization tools. The choice of language and toolchain depends on the hardware, the nature of the application, and your specific goals (e.g., general-purpose computation, machine learning, or graphics rendering).
