Artificial intelligence and machine learning are no longer experimental technologies confined to research labs or innovation teams. Today, AI workloads are becoming core to day-to-day business operations, driving automation, decision-making, personalization, and real-time intelligence.

Industries across the board are adopting AI at scale:

- Healthcare for diagnostics, imaging, and predictive care

- Finance for fraud detection, risk modeling, and algorithmic trading

- SaaS for intelligent automation and customer insights

- Manufacturing for predictive maintenance and quality control

- Media and entertainment for content recommendations and generation

However, as AI moves into production, organizations are discovering a hard truth: traditional IT infrastructure was never designed for AI workloads. Legacy systems struggle with the scale, speed, and complexity required by modern AI models, leading to slow experimentation, failed proofs of concept, and limited business impact.

This is why enterprises must now rethink both data readiness and infrastructure readiness for AI.

AI Agents and the Rise of AI-Ready Data

AI agents have the potential to become indispensable tools for automating complex tasks. But bringing agents to production remains challenging.

Just like human workers, AI agents require secure, relevant, accurate, and recent data to deliver business value. The industry now refers to data that meets these criteria as AI-ready data.

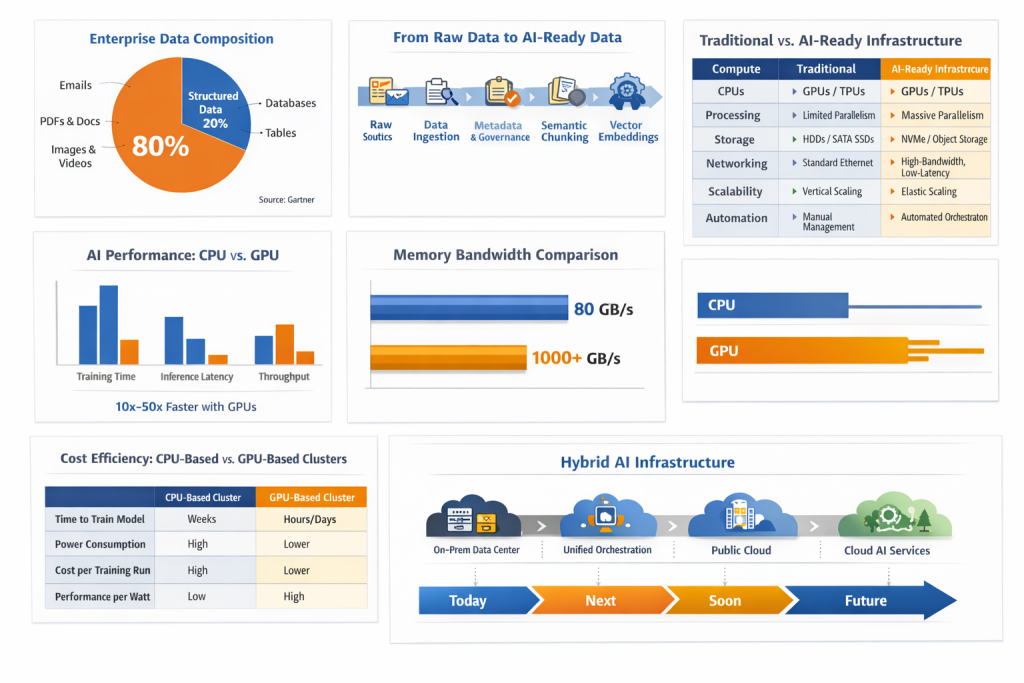

Making enterprise data AI-ready is especially difficult because most organizational data is unstructured. Gartner estimates that 70% to 90% of enterprise data is unstructured, including emails, PDFs, presentations, images, videos, and audio files. This data presents unique governance and scalability challenges due to its volume, variety, and lack of structure.

An emerging class of GPU-accelerated data and storage infrastructure, often referred to as AI data platforms, transforms unstructured data into AI-ready data quickly and securely, enabling AI agents and applications to operate reliably at scale.

What Is AI-Ready Data and AI-Ready Infrastructure?

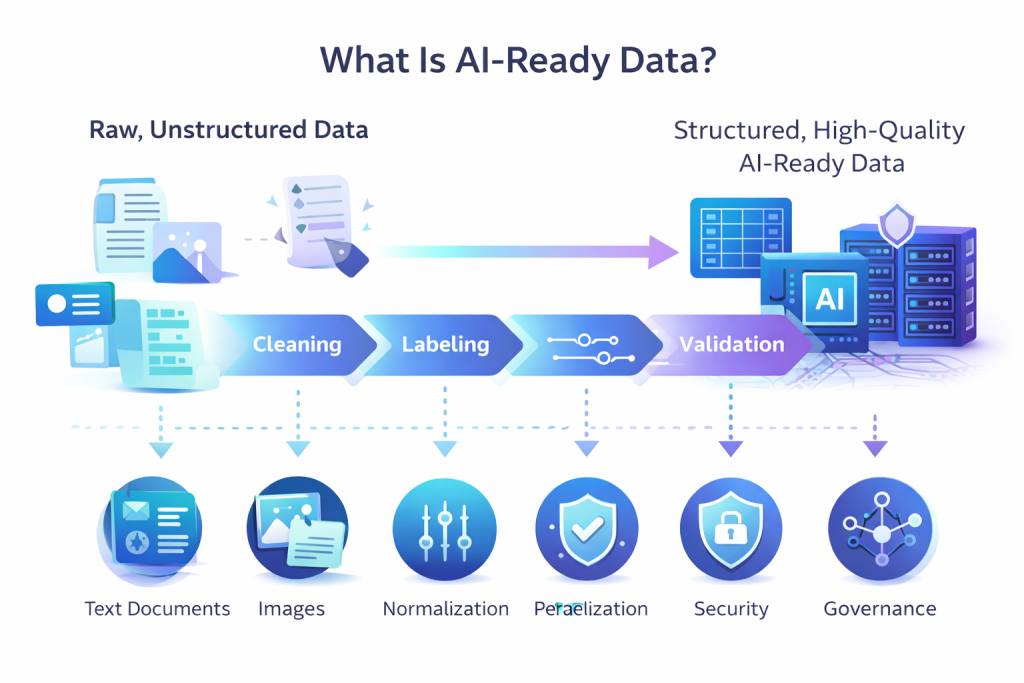

AI-ready data refers to data that can be directly consumed by AI systems for training, fine-tuning, and retrieval-augmented generation (RAG) without requiring additional preparation. It is structured, governed, and optimized so AI models can efficiently interpret and use it to generate accurate and reliable outcomes.

Because most enterprise data is unstructured (documents, images, logs, emails, and so on), it must be transformed before it can power AI initiatives. Making unstructured data AI-ready involves several critical steps:

- Collecting and curating data from diverse and distributed sources

- Applying rich metadata to enable data management, governance, and compliance

- Dividing source documents into semantically meaningful chunks

- Converting those chunks into vector embeddings for efficient storage, search, and retrieval
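The chunking, metadata, and embedding steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production pipeline: `chunk_text`, `embed`, `build_index`, and `search` are hypothetical helper names, chunking is by fixed word count rather than true semantic boundaries, and the hashed bag-of-words vector merely stands in for a real embedding model.

```python
import hashlib
import math


def chunk_text(text, max_words=40, overlap=10):
    """Split text into overlapping word windows (a stand-in for semantic chunking)."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the current window already reached the end of the text
    return chunks


def embed(text, dim=64):
    """Toy embedding: hashed bag-of-words, L2-normalized. Not a real model."""
    vec = [0.0] * dim
    for word in text.lower().split():
        digest = hashlib.md5(word.encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "big") % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def build_index(documents):
    """Attach metadata to every chunk and store its vector next to the text."""
    index = []
    for doc in documents:
        for i, chunk in enumerate(chunk_text(doc["text"])):
            index.append({
                "source": doc["source"],   # metadata for governance and lineage
                "chunk_id": i,
                "text": chunk,
                "vector": embed(chunk),
            })
    return index


def search(query, index, top_k=1):
    """Return the top_k records ranked by cosine similarity to the query."""
    q = embed(query)
    scored = [(sum(a * b for a, b in zip(q, rec["vector"])), rec) for rec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [rec for _, rec in scored[:top_k]]
```

In a real deployment the `embed` function would call an embedding model and the list returned by `build_index` would live in a vector database; the record layout (source, chunk id, text, vector) is the part this sketch is meant to convey.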

Without this preparation, organizations cannot fully realize the value of their AI investments. AI-ready data forms the foundation for high-quality model performance, faster insights, and trustworthy AI outputs.

However, data readiness alone is not sufficient. It must be supported by an AI-ready infrastructure—an architecture designed to handle the scale, complexity, and performance demands of modern AI workloads.

An AI-ready infrastructure must be capable of:

- Managing large volumes of both structured and unstructured data

- Executing complex algorithms and AI workloads in real time

- Scaling efficiently both horizontally and vertically to meet growing demands

- Providing high availability, fault tolerance, and robust security

Together, AI-ready data and AI-ready infrastructure enable organizations to deploy AI systems that are scalable, resilient, and production-ready. Today, AI is not just a technological advancement—it is a strategic necessity for enterprises seeking sustained innovation, operational efficiency, and long-term competitiveness.

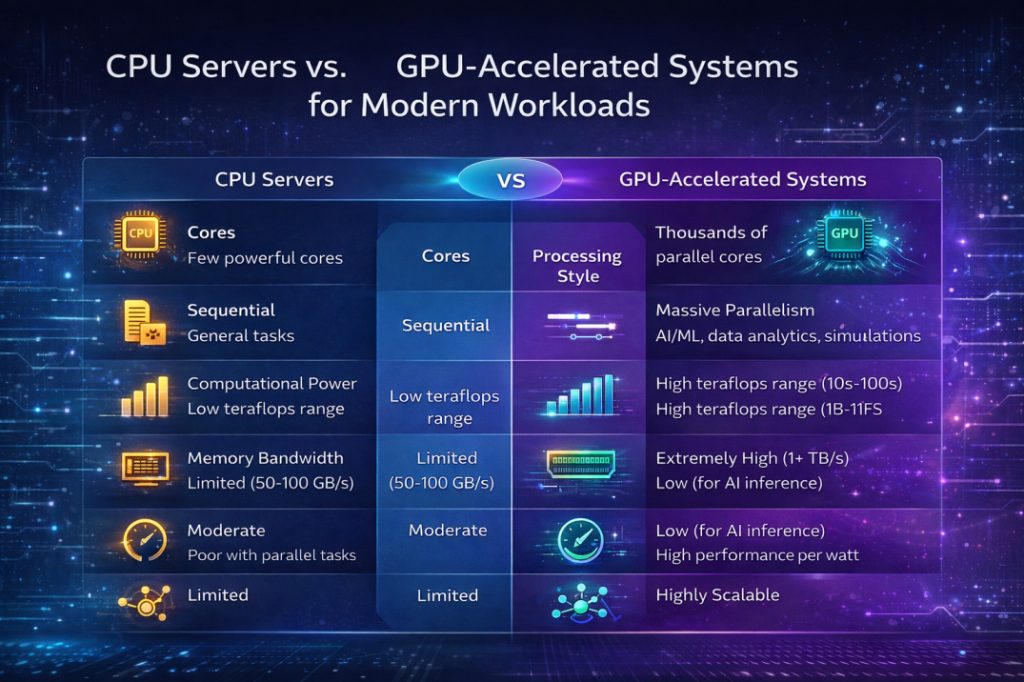

GPU-accelerated systems outperform traditional CPU-based servers for modern workloads, especially AI, machine learning, data analytics, and real-time processing. The sections that follow explain where traditional infrastructure falls short and why GPUs close the gap.

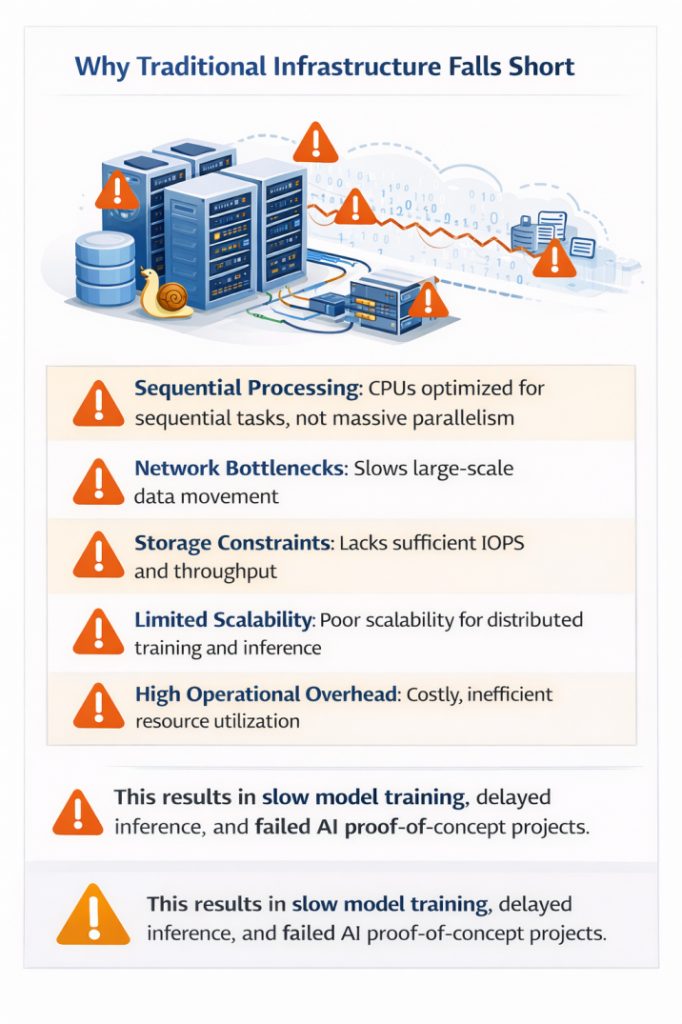

Why Traditional Infrastructure Falls Short

Traditional CPU-centric infrastructure struggles to meet AI demands due to several limitations:

- CPUs are optimized for sequential processing, not massive parallelism

- Network bottlenecks slow large-scale data movement

- Storage systems lack sufficient IOPS and throughput

- Poor scalability for distributed training and inference

- High operational overhead and inefficient resource utilization

In practice, this results in slow model training, delayed inference, and failed AI proof-of-concept projects, preventing AI from reaching production scale.

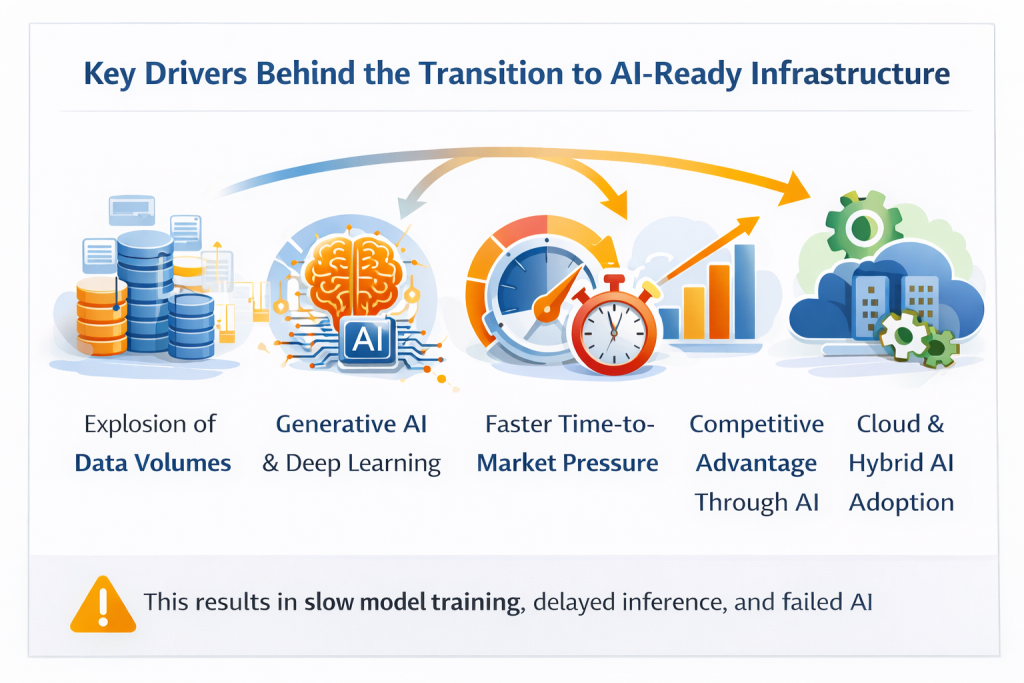

Key Drivers Behind the Transition to AI-Ready Infrastructure

Several forces are accelerating the shift:

- Explosive growth in data volumes

- The rise of deep learning and generative AI

- Pressure for faster time-to-market

- Competitive differentiation through AI-driven insights

- Widespread adoption of cloud and hybrid AI environments

Together, these drivers make AI-ready infrastructure a business necessity, not an optional upgrade.

Core Components of AI-Ready Infrastructure

a. Accelerated Compute

- GPUs vs CPUs for parallel workloads

- Dedicated vs shared GPUs

- Virtualized GPUs (vGPU, GPU pass-through)

GPUs excel at parallel processing, delivering massive throughput for AI training and inference.

b. High-Performance Storage

- NVMe and parallel file systems

- Object storage for unstructured data

- Data locality to reduce latency

c. High-Speed Networking

- Low-latency, high-bandwidth fabrics

- Critical for distributed training and real-time analytics

d. Cloud, Hybrid & On-Prem Models

- Cloud for elasticity and experimentation

- On-prem for control and compliance

- Hybrid models for flexibility and cost optimization

Why GPU-Accelerated Systems Outperform Traditional Servers

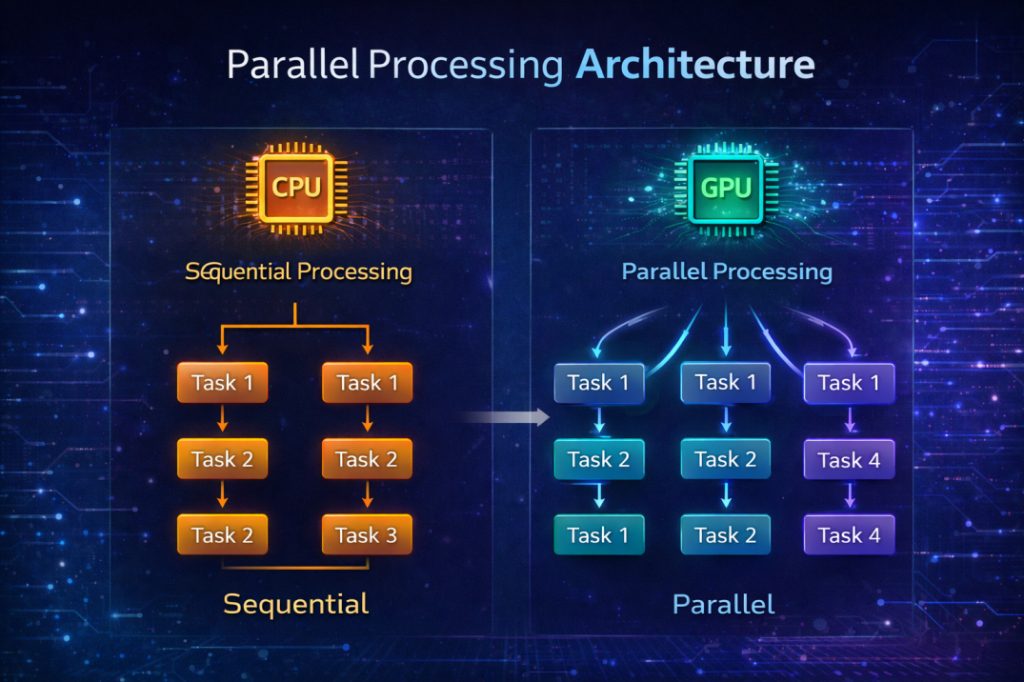

1. Parallel Processing Architecture

GPUs are built for massive parallelism. Unlike CPUs, which typically have a relatively small number of high-performance cores optimized for sequential tasks, GPUs contain hundreds to thousands of smaller cores designed to execute many operations simultaneously. This means:

- CPUs run a handful of threads at a time, each optimized for fast sequential execution and complex branching.

- GPUs break down large computations (like matrix multiplications) into thousands of parallel operations.

Impact for Modern Workloads:

AI and deep learning models, especially neural networks, require repeated, parallel matrix and vector computations. GPUs handle these far more efficiently than CPUs, significantly reducing training and inference times.
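To see why matrix multiplication parallelizes so well, note that every output element depends only on one row of the first matrix and one column of the second, so no cell has to wait for another. The sketch below uses plain Python with two hypothetical helpers, `output_cell` and `matmul_parallel`, and submits each cell as an independent task to a thread pool. The thread pool only stands in for GPU cores to show the decomposition; it is not expected to make Python faster.

```python
from concurrent.futures import ThreadPoolExecutor


def output_cell(a, b, i, j):
    """One independent unit of work: row i of a dotted with column j of b."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul_parallel(a, b, workers=4):
    """Compute the matrix product of a and b, one task per output cell."""
    rows, cols = len(a), len(b[0])
    cells = [(i, j) for i in range(rows) for j in range(cols)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as `cells`.
        values = list(pool.map(lambda ij: output_cell(a, b, *ij), cells))
    # Reassemble the flat list of results into a rows x cols matrix.
    return [values[r * cols:(r + 1) * cols] for r in range(rows)]
```

For two 2x2 inputs this creates four tasks; for the matrices inside a large neural network the same decomposition yields millions of independent tasks, which is the kind of work GPU cores are designed to absorb.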

2. Massive Computational Throughput

GPUs deliver much higher raw computational power than traditional CPU servers:

- High-end GPUs can achieve tens to hundreds of teraflops (trillions of floating-point operations per second) depending on numeric precision, while CPUs are typically in the low single-digit teraflops range.

This huge difference in processing capacity translates to dramatically faster computing, especially for:

- Training large machine learning models

- Running simulations and scientific computations

- Processing big data analytics

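As a rough illustration, a job that might take weeks on a CPU cluster can often finish in hours on GPUs, although the actual speedup depends on the model and on how well the workload parallelizes.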
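A back-of-envelope estimate makes the gap concrete. The sketch below divides the total floating-point work by the throughput a device actually sustains. The function name `estimate_hours` and every input value are illustrative assumptions, not measurements of any specific hardware.

```python
def estimate_hours(total_flops, peak_tflops, utilization):
    """Hours needed to execute total_flops at a sustained fraction of peak.

    total_flops  -- total floating-point operations in the job (assumed)
    peak_tflops  -- advertised peak throughput in teraflops (assumed)
    utilization  -- fraction of peak actually sustained, between 0 and 1
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    sustained_flops_per_second = peak_tflops * 1e12 * utilization
    return total_flops / sustained_flops_per_second / 3600


# Hypothetical job of 1e18 operations, both devices at 40% of peak.
cpu_hours = estimate_hours(1e18, peak_tflops=2, utilization=0.4)
gpu_hours = estimate_hours(1e18, peak_tflops=100, utilization=0.4)
```

With these made-up inputs the estimate is roughly 347 hours (about two weeks) on the 2-teraflop device versus about 7 hours on the 100-teraflop device. Real jobs rarely sustain the same utilization on both, so the result should be read as an order-of-magnitude guide only.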

3. Higher Memory Bandwidth

Modern GPUs also have much higher memory bandwidth compared to CPUs:

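- GPUs with high-bandwidth memory (HBM) often exceed 1 TB/s of memory throughput, versus around 50–100 GB/s for a typical CPU socket and a few hundred GB/s on recent many-channel server CPUs.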

Why this matters: Large AI models need to move huge volumes of data between memory and processing units. High bandwidth avoids bottlenecks, maximizing GPU core utilization and accelerating overall workloads.
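One common way to reason about this is the roofline model, which is brought in here as an illustration rather than taken from the text above: attainable throughput is the smaller of the compute peak and memory bandwidth multiplied by the number of operations performed per byte moved. The function name `attainable_tflops` and the sample numbers are assumptions.

```python
def attainable_tflops(peak_tflops, bandwidth_tb_s, flops_per_byte):
    """Roofline estimate: throughput is capped by compute or by memory traffic.

    bandwidth_tb_s * flops_per_byte has units of TB/s * FLOP/byte = TFLOP/s.
    """
    memory_bound_limit = bandwidth_tb_s * flops_per_byte
    return min(peak_tflops, memory_bound_limit)


# A kernel that does 10 operations per byte on a hypothetical 100-teraflop chip:
fast_memory = attainable_tflops(100, bandwidth_tb_s=1.0, flops_per_byte=10)
slow_memory = attainable_tflops(100, bandwidth_tb_s=0.1, flops_per_byte=10)
```

In this example both results sit far below the 100-teraflop peak, and the tenfold bandwidth difference becomes a tenfold throughput difference. Only kernels with high arithmetic intensity, such as large matrix multiplications, reach the compute ceiling.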

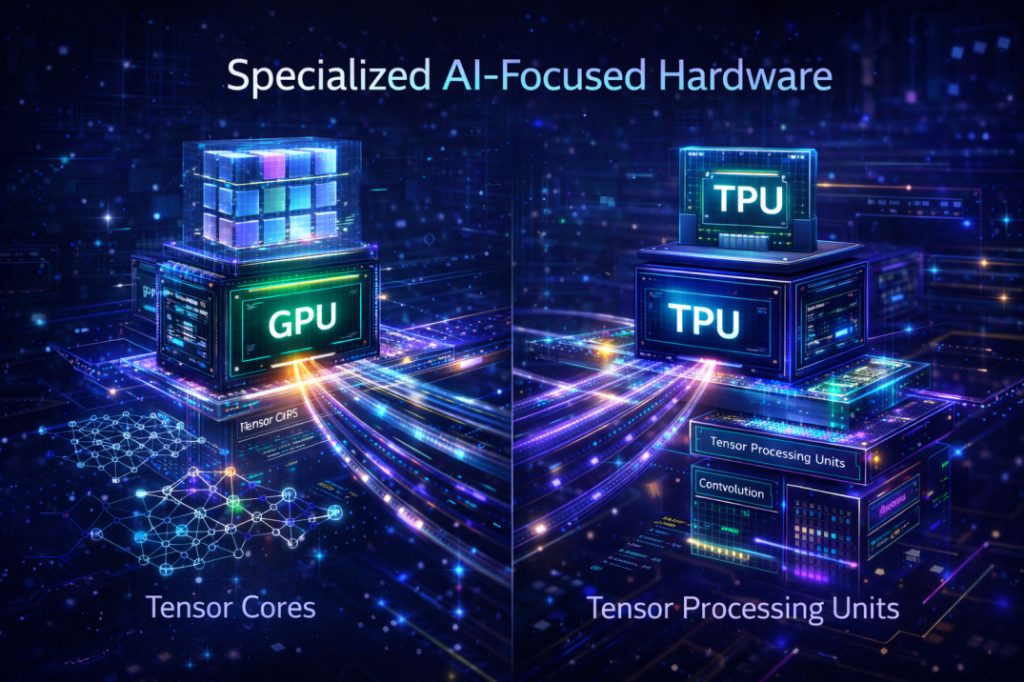

4. Specialized AI-Focused Hardware

GPUs include features tailored specifically for AI:

- Tensor Cores for accelerated mixed-precision matrix math (critical in deep learning)

- Optimized paths for common AI ops like convolutions and attention mechanisms

These aren’t just more cores; they’re specialized ones, meaning GPUs can perform AI tasks far more rapidly and efficiently than CPUs designed for general computing.
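Mixed precision generally means storing and multiplying values in a narrow format such as FP16 while accumulating the running sum in a wider one. The sketch below imitates that pattern in pure Python using the `struct` module's half-precision format code `"e"`. The helper names are hypothetical, and the 64-bit Python float used as the accumulator stands in for the FP32 accumulator that hardware typically uses.

```python
import struct


def to_fp16(x):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def dot_mixed(a, b):
    """FP16 inputs and products, accumulated in a wider format."""
    acc = 0.0  # wide accumulator (64-bit here, FP32 on typical hardware)
    for x, y in zip(a, b):
        acc += to_fp16(x) * to_fp16(y)
    return acc


def dot_fp16_only(a, b):
    """Same inputs, but the running sum is rounded to FP16 after every step."""
    acc = 0.0
    for x, y in zip(a, b):
        product = to_fp16(to_fp16(x) * to_fp16(y))
        acc = to_fp16(acc + product)
    return acc


ones = [1.0] * 4096
wide = dot_mixed(ones, ones)
narrow = dot_fp16_only(ones, ones)
```

Here `wide` is 4096.0, while `narrow` stalls at 2048.0 because FP16 cannot represent 2049 and each further addition rounds back down. Keeping the accumulator wide is what lets the narrow format be used for speed without losing the sum.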

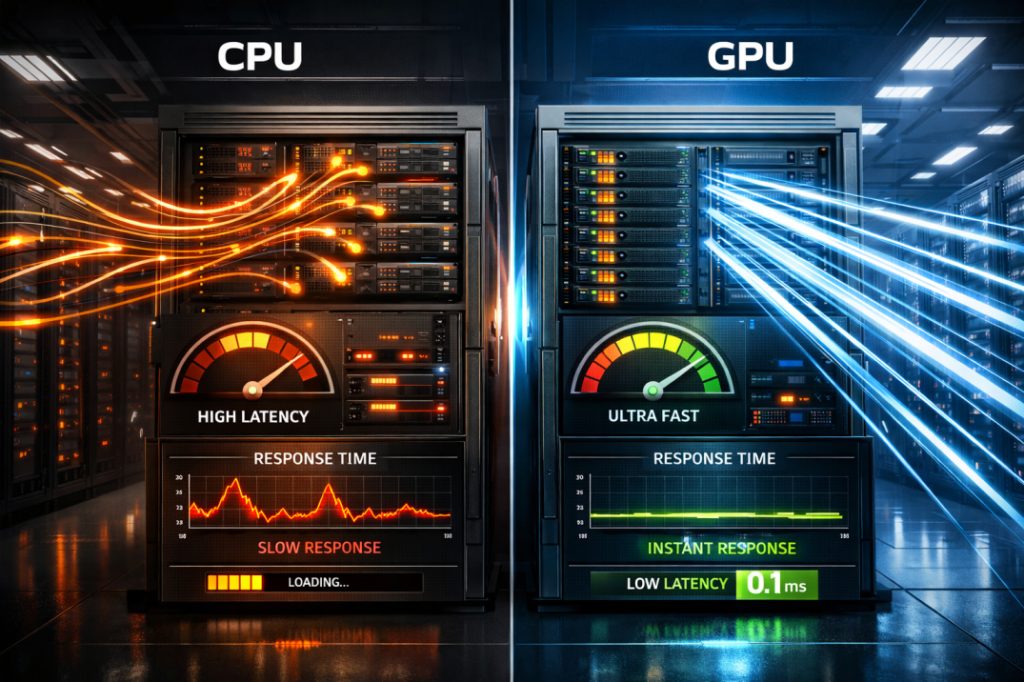

5. Real-Time and Low-Latency Performance

GPUs significantly reduce latency in inference tasks where fast responses are crucial:

- For tasks like autonomous driving, real-time analytics, or responsive chatbots, GPUs can often return results in under 100 ms, where CPU inference on the same model can take substantially longer.

This makes GPU acceleration essential not just for training models, but for deploying them in production environments where quick results matter.

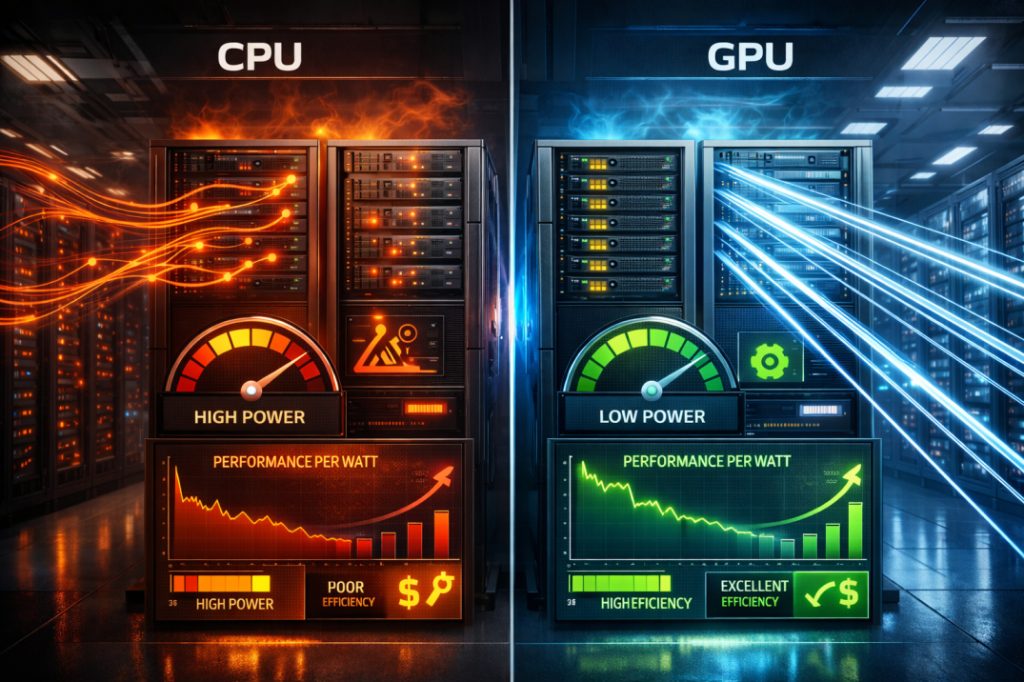

6. Energy Efficiency & Cost Effectiveness

Even though GPU systems may cost more upfront:

- They complete workloads faster

- They deliver better performance per watt than CPUs for parallel tasks

- They reduce operational costs (power, cooling, time to market)

For large AI jobs, this means the total cost of ownership can be lower with GPUs than with an equivalent fleet of CPU servers.
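The cost argument reduces to simple arithmetic: a GPU node is cheaper for a given job whenever its speedup exceeds the ratio of its hourly cost to the CPU node's hourly cost. The sketch below expresses that; `job_cost`, `break_even_speedup`, and all rates are hypothetical, and the hourly rate is assumed to fold in amortized hardware, power, and cooling.

```python
def job_cost(runtime_hours, hourly_rate, nodes=1):
    """Total cost of one job: runtime x all-in hourly rate x node count."""
    return runtime_hours * hourly_rate * nodes


def break_even_speedup(gpu_hourly_rate, cpu_hourly_rate):
    """Speedup a GPU node must deliver to match the CPU node's cost per job."""
    return gpu_hourly_rate / cpu_hourly_rate


# Hypothetical rates: a GPU node at 30 per hour, a CPU node at 2 per hour.
needed = break_even_speedup(30.0, 2.0)           # GPU must be 15x faster
cpu_total = job_cost(runtime_hours=300, hourly_rate=2.0)
gpu_total = job_cost(runtime_hours=300 / 50, hourly_rate=30.0)  # assumed 50x speedup
```

With these invented figures the GPU node needs a 15x speedup to break even, and an assumed 50x speedup brings the job from 600 down to 180 in the same currency units. If the workload parallelizes poorly and the speedup falls below the break-even point, the CPU option remains cheaper.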

7. Scalability & Modern Workload Support

GPUs scale well across clusters and cloud environments:

- Multiple GPUs can work together on large models or datasets

- Cloud GPU offerings allow elastic scaling based on demand

- GPU clusters support distributed training and real-time analytics

This flexibility is crucial as enterprises adopt AI at scale—traditional CPU servers struggle to match this level of scalability.
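Data-parallel training is one common way multiple GPUs cooperate: each worker computes gradients on its own slice of the batch, and the gradients are averaged before every worker applies the same update. The sketch below shows the idea for a one-parameter model `y = w * x` with squared-error loss. All names are hypothetical, the workers run sequentially here, and `all_reduce_mean` merely stands in for the collective operation a real cluster would perform over its network fabric.

```python
def local_gradient(w, shard):
    """Mean gradient of (w*x - y)^2 with respect to w over one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values):
    """Stand-in for an all-reduce: every worker receives the average."""
    return sum(values) / len(values)


def data_parallel_step(w, data, workers, lr=0.01):
    """One gradient-descent step with the batch split evenly across workers."""
    if len(data) % workers != 0:
        raise ValueError("this sketch assumes equally sized shards")
    size = len(data) // workers
    shards = [data[i * size:(i + 1) * size] for i in range(workers)]
    # On a real cluster each shard's gradient is computed on a different GPU.
    grads = [local_gradient(w, shard) for shard in shards]
    return w - lr * all_reduce_mean(grads)


batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
single = data_parallel_step(0.0, batch, workers=1)
split = data_parallel_step(0.0, batch, workers=2)
```

Because the shards are the same size, averaging the per-worker gradients gives the same update as processing the whole batch on one device, so `single` and `split` agree up to floating-point rounding. The price of scaling out is the gradient exchange itself, which is why interconnect bandwidth matters for distributed training.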

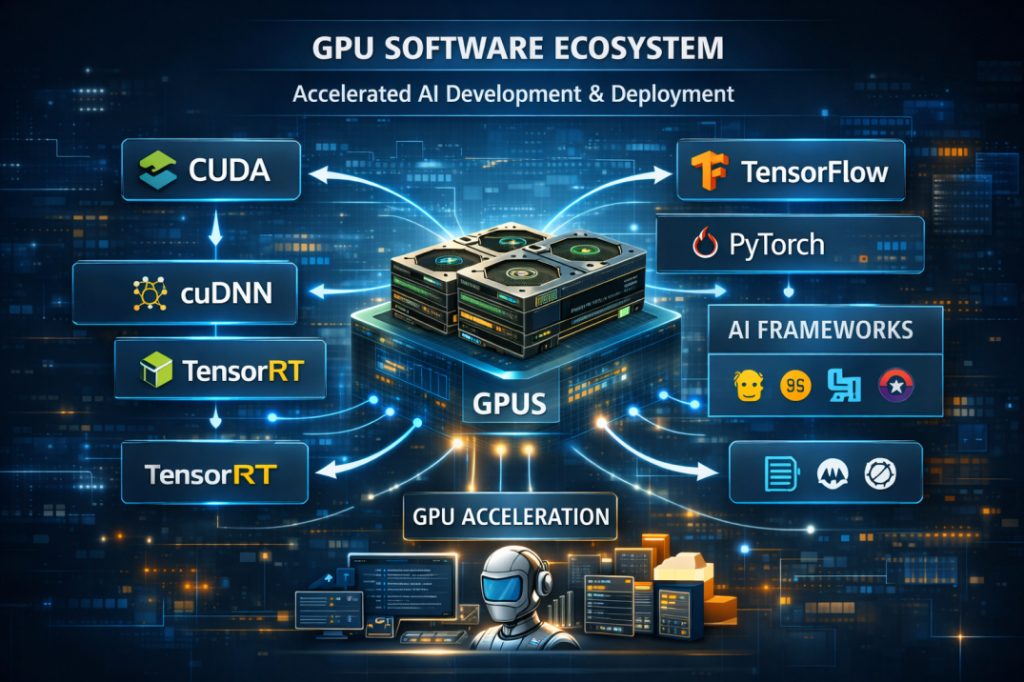

8. Software Ecosystems & Developer Support

GPUs aren’t just hardware; they’re part of a rich ecosystem:

- CUDA, cuDNN, TensorRT (NVIDIA)

- Optimized support in AI frameworks like TensorFlow, PyTorch, and many others

This ecosystem accelerates development and deployment, making it easier to build advanced models with GPU acceleration.

Key Components of an AI-Ready Infrastructure

To support modern AI workloads, infrastructure must be built on a few essential technology pillars that enable performance, scalability, and security.

1. High-Performance Computing

AI and machine learning models require massive computational power to process data and train models efficiently.

GPUs are the backbone of AI workloads due to their ability to perform large-scale parallel processing. In some cases, TPUs and FPGAs are also used to accelerate specific AI operations. High-performance servers with multi-core CPUs and hardware accelerators enable real-time inference and faster model training.

Example: Autonomous driving, voice recognition, and image analysis models rely on GPU clusters for real-time performance.

2. Fast and Flexible Storage

AI workloads depend on rapid access to large volumes of data. NVMe storage delivers ultra-low latency and high throughput, while object storage efficiently manages unstructured data such as images, videos, and documents. Distributed file systems help scale storage performance across multiple servers.

Example: E-commerce platforms use NVMe storage to improve response times and customer experience.

3. Hybrid Cloud Infrastructure

An AI-ready environment combines the flexibility of public cloud with the security and control of private infrastructure. Container platforms like Kubernetes enable consistent AI model deployment across environments, while hybrid and multi-cloud strategies allow workloads to move dynamically based on performance, cost, and compliance needs.

Example: Financial organizations use hybrid clouds to run sensitive AI workloads on-premises while scaling in the public cloud during peak demand.

4. Intelligent and Scalable Networking

High-speed, low-latency networking is critical for data-intensive AI workloads. Software-Defined Networking (SDN) enables automated traffic management, while AI-optimized networks support high-bandwidth data transfer. Edge computing and 5G further reduce latency by processing data closer to the source.

Example: Streaming platforms use intelligent networks to optimize content delivery based on real-time user behavior.

5. AI-Enhanced Cybersecurity

AI systems are exposed to threats such as data poisoning and model manipulation. An AI-ready infrastructure must include intelligent security controls such as AI-powered intrusion detection, Zero Trust access models, and automated incident response to protect data and models.

Example: Enterprises use AI-driven security analytics to detect anomalies and respond to threats in real time.

Business Benefits of Moving to AI-Ready Infrastructure

- Faster model training and inference

- Lower infrastructure cost per workload

- Improved scalability and flexibility

- Higher developer productivity

- Future-proof IT investments

AI-Ready Infrastructure and Cost Optimization

- Right-sizing GPU resources

- Balancing on-demand vs reserved capacity

- Avoiding over-provisioning

- Improving energy efficiency and sustainability

Security, Governance, and Compliance Considerations

- Data privacy in AI pipelines

- Secure access to GPUs and datasets

- Model governance and auditability

- Compliance with industry regulations

Common Challenges in the Transition

- High upfront investment

- Skills and talent gaps

- Integration with existing systems

- Choosing the right hardware and vendors

- Managing AI workloads at scale

Best Practices for Transitioning to AI-Ready Infrastructure

- Start with pilot AI workloads

- Assess infrastructure gaps

- Design scalable, modular architectures

- Invest in automation and orchestration

- Partner with experienced AI infrastructure providers

Real-World Use Cases

- AI-powered analytics platforms

- Computer vision systems

- Generative AI applications

- Recommendation engines

- Predictive maintenance

Future Outlook: What’s Next for AI Infrastructure?

- AI-native data centers

- Specialized AI accelerators

- Edge AI infrastructure

- More efficient and sustainable AI systems

Conclusion

AI-ready infrastructure is no longer optional. It is a strategic enabler that determines how effectively organizations can innovate, compete, and scale in an AI-driven world.

Enterprises that invest now in AI-ready data and GPU-accelerated infrastructure will unlock faster insights, lower costs, and long-term competitive advantage, while those that delay risk falling behind.
